Three new solutions for retail’s major recruitment challenges

An average time-to-hire of 40 days. Hiring costs in excess of $2,000 per candidate. An average turnover rate of 60-70%.

The challenges of hourly recruitment in the retail industry have been well-documented.

Despite this, many of the largest companies persist with old-school recruitment processes.

Given the breakneck pace and scale of the industry, it’s hard to diagnose and fix the problem.

Understandably, many HR leaders have been quick to layer on technology solutions that seem to make things easier. In practice, these tools have added complexity, making efficiency gains harder to achieve and actionable insights harder to find.

The big and unmanageable HR tech stack

Where recruitment is concerned, an HR tech stack tends to look like this: an unwieldy ATS, often coupled with a conversational AI or scheduling tool.

This stack is implemented across a decentralized system – hundreds of stores across the country – resulting in a situation where hiring managers are forced to use systems they don’t understand and don’t like.

The bottom line is this: Retail companies are overstacked, overworked, and need to adopt different solutions to old problems.

1. Solve high turnover through soft skill and personality trait matching

One of the biggest challenges with recruitment at major retail companies is high turnover rates. Retail staff members move fast and often, and have a high likelihood of migrating to competing businesses.

This is partially a nature-of-the-beast problem, but if we better understand what makes people tick, we can better match them to the roles at which they’re likely to succeed, and therefore keep them longer.

For example, we know that the best retail cashiers are high in extraversion. They’re energized by being around people, have good interpersonal skills, and have a lower likelihood of experiencing negative emotion while on the job.

It makes sense, then, to prioritize extraversion when matching candidates to the role of cashier. That’s a personality trait – with attendant soft skills – that will predict success for that role.

When people are matched to the job for which they are best suited, they’ll experience higher levels of purpose and satisfaction. It’s obvious why – the daily activities will invigorate rather than drain them. People who have purpose stay longer.

Therefore, if you accurately match soft skills to roles, you’ll reduce churn. Our AI Smart Chat Interviewer is really good at this: Across the board, our skill-matching power reduces non-regrettable churn by a minimum of 25%.

Side note: HEXACO is your secret weapon

If you’re keen to get started measuring soft skills, download our HEXACO job interview rubric. It features more than 20 interview questions designed by our personality psychologists to assess the skills of candidates that come your way. It will even help you figure out what soft skills are best for you based on the needs and values of your organization.

2. Reduce competition from other employers through smart employer branding

Chances are, when your employees or candidates leave, they’re staying within the industry – and that means they’re likely going to your competitors. It’s 2023, and the stock-standard advice would be to offer higher wages and perks.

That’s not always feasible, and besides, there’s no guarantee that doing so will markedly reduce the threat of poaching and abandonment. Money is important, but it doesn’t trump purpose and belonging.

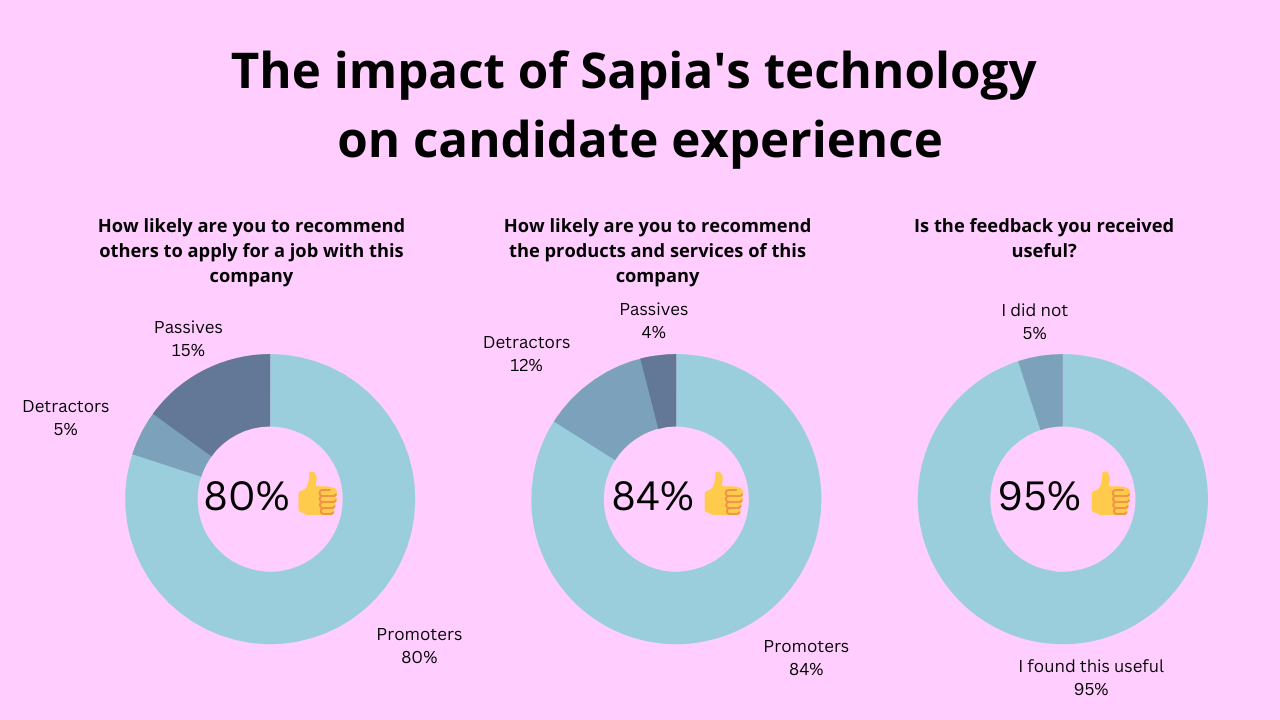

The key to better employer branding is a system for active listening. Find out what your people, be they employees or candidates, think. Ask them often. It’s important to do this at the onboarding stage, but it should continue through to the point of highest churn – the six-month mark.

Our joint report with Aptitude Research uncovered some interesting data on the importance of two-way feedback between candidates and employers.

Gathering and acting on mutual feedback:

- Boosts quality of hire from 36 to 58%, on average

- Boosts candidate experience from 34 to 44%, on average

- Improves first-year retention from 35 to 50%, on average

An NPS (Net Promoter Score) framework is a good place to start. It rests on a single question: “How likely are you to recommend our company to a friend or colleague?”

The NPS tracking question is easily configurable and embeddable into automated emails, meaning it can be set up through your ATS with little additional work.

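When you begin to analyze the data, keep things simple: Dump the responses into a spreadsheet and calculate your score, which is the percentage of promoters (ratings of 9 or 10 on the usual 0-to-10 scale) minus the percentage of detractors (ratings of 0 to 6). If your score is below 0, you’ve got work to do; if it’s between 0 and +30, you’re doing well. Above +30, well done!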

(If you’re reading this, it’s unlikely that you’ll get a 30+ score on the first go-round. That’s okay – the goal is to find out how much work you’ve got to do.)
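If you’d rather script the calculation than do it in a spreadsheet, here is a minimal Python sketch. It assumes the standard NPS convention (a 0-to-10 rating, with 9 and 10 counted as promoters and 0 to 6 as detractors) and maps the result to the bands described above. The function names and sample ratings are hypothetical, and treating a score of exactly 30 as “doing well” is an assumption.

```python
def net_promoter_score(ratings):
    """Return NPS for a list of 0-10 ratings: % promoters minus % detractors."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)   # ratings of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)  # ratings of 0 to 6
    return 100 * (promoters - detractors) / len(ratings)


def interpret(score):
    """Map a score to the rough bands used in this article.

    Assumption: a score of exactly 30 falls in the middle band.
    """
    if score < 0:
        return "work to do"
    if score <= 30:
        return "doing well"
    return "well done"


# Hypothetical survey responses, for illustration only.
sample_ratings = [10, 9, 9, 8, 7, 7, 6, 5, 10, 3]
score = net_promoter_score(sample_ratings)
print(f"NPS: {score:+.0f} ({interpret(score)})")
```

In this made-up sample, four promoters and three detractors out of ten responses give a score of +10. Ratings of 7 and 8 count toward the total but toward neither group, which is why a simple average of ratings is not the same thing as NPS.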

The benefit of benchmarking NPS is that it gives your business a single, easy-to-understand proxy for employee engagement. Once you’ve got the number, you can start to make small changes and see how that affects the overall number.

3. Increase your talent pool by limiting candidate abandonment

We hear it all the time: Sourcing is a big problem. When we ask customers about their current processes, however, a common answer emerges: “We don’t really know how many people we’re losing from our recruitment funnel, or why.”

This presents a great opportunity: Often, improving an application process means removing things, rather than adding them.

Conventional wisdom tells us that the longer your application and interview process goes on, the higher your dropout rate will be. But that’s a generalized issue – it tells you nothing about how to fix the problem, beyond simply making it shorter. You need specific, localized data to diagnose and fix your leakage spots.

Data from a 2022 Aptitude Research report on key interviewing trends found that candidates tend to drop out at the following stages, in the following proportions:

- 22% of candidates drop out at the application stage

- 24% at the screening stage

- 25% at the interview stage

- 18% at the assessment stage

- 9% at the offer stage

Let’s say that you had 100 visitors to your careers (or job ad) page, and 20 of them completed the first-step application form on that page. You’ve lost 80% of your possible pool right there. Not great, but at least you know – now you can examine that page to uncover possible issues preventing conversion.

Is the page too long? Does it have too much text? Is the ‘apply’ button clearly shown? Is the form too long, requiring too much information to fill out? Are your perks/EVP attributes clearly displayed?
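To find your own leakage spots, the same arithmetic can be repeated for every step of the funnel. The Python sketch below is one way to do that, assuming you can export a head count for each stage. The first two counts come from the example above; the later stage names and counts are invented for illustration, and `stage_dropout` is a hypothetical helper, not part of any ATS.

```python
def stage_dropout(funnel):
    """Given ordered (stage, count) pairs, return the share lost at each step."""
    results = []
    for (prev_name, prev_count), (name, count) in zip(funnel, funnel[1:]):
        # Share of people at the previous stage who did not reach this one.
        lost = 1 - count / prev_count if prev_count else 0.0
        results.append((f"{prev_name} -> {name}", lost))
    return results


# First two counts match the example above; the rest are hypothetical.
funnel = [
    ("careers page visit", 100),
    ("application completed", 20),
    ("screening completed", 15),
    ("interview completed", 9),
    ("offer accepted", 6),
]

for step, lost in stage_dropout(funnel):
    print(f"{step}: {lost:.0%} lost")
```

The step with the largest percentage lost is the first place to look, whether that means shortening a form or reworking a page.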

We’ve got an in-depth guide for measuring and improving your abandonment rate here.