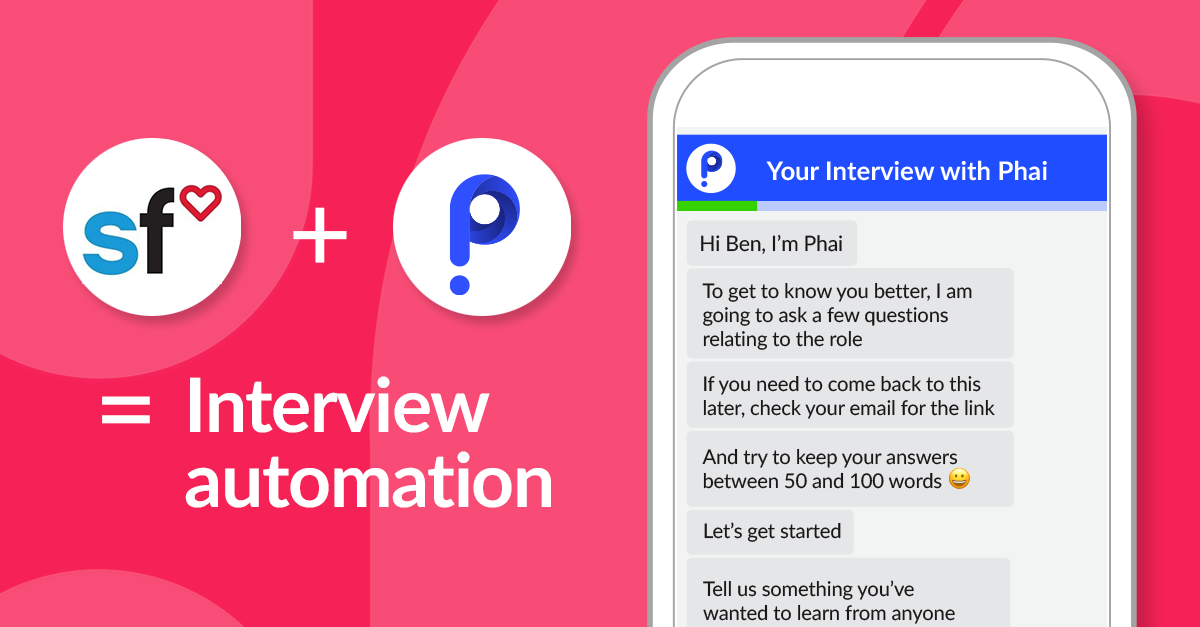

SuccessFactors + Sapia = Faster, fairer hiring

The good people at SuccessFactors have created an HR software system to help you deliver business strategy alignment, team execution, and maximum people performance. They’re passionate about helping you empower your workforce. And by integrating Sapia’s interview automation, you can now take full advantage of the SuccessFactors ATS and get ahead of your competitors with faster, fairer and better hiring results.

Decrease SuccessFactors ATS hiring complexity

From attracting candidates of diverse backgrounds to delivering an exceptional candidate experience, you’re expected to do a lot! All whilst you’re selecting from thousands of applicants…

The good news is that technology has advanced to support recruiters. Integrating Sapia artificial intelligence technology with the powerful SuccessFactors ATS facilitates a fast, fair, efficient recruitment process that candidates love.

You can now:

- Reduce your screening time by up to 90%

- Increase your candidate satisfaction to near 100%

- Achieve interview completion rates over 90%

- And reduce screening bias for good

Sapia + SuccessFactors

Gone are the days of screening CVs and then running phone screens to find the best talent. The number of people applying for each job has recently grown 5-10 times. Reading each CV is simply no longer an option. In any case, the attributes that mark a high performer often aren’t in CVs, and the risk of increasing bias is high.

You can now streamline your SuccessFactors process by integrating Sapia’s interview automation.

We’ve created a quick, easy and fair hiring process that candidates love.

- Create a vacancy in SuccessFactors, and a Sapia interview link will be created.

- Include the link in your advertising. Every candidate will have an opportunity to complete a FirstInterview via chat.

- See results as soon as candidates complete their interview. Each candidate’s scores, rank, personality assessment, role-based traits and communication skills are available as soon as they complete the interview. Every candidate will receive automated, personalised feedback.

By sending out one simple interview link, you nail speed, quality and candidate experience in one hit.

Integrate SuccessFactors and get ahead

Sapia’s award-winning chat Ai is available to all SuccessFactors users. You can automate interviewing, screening, ranking and more, with a minimum of effort! Save time, reduce bias and deliver an outstanding candidate experience.

Experience Sapia’s Chat Interview for yourself

The interview that all candidates love

As unemployment rates rise, it’s more important than ever to show empathy for candidates and add value when we can. Using Sapia, every single candidate gets a FirstInterview through an engaging text experience on their mobile device, whenever it suits them. Every candidate receives personalised MyInsights feedback, with helpful coaching tips which candidates love.

Together, Sapia and SuccessFactors deliver an approach that is:

- Relevant—move beyond the CV to the attributes that matter most to you: grit, curiosity, accountability, critical thinking, agility and communication skills

- Respectful—give every single person an interview and never ghost a candidate again

- Dignified—show you value people’s time by providing every single applicant personal feedback

- Fair—avoid video in first-round interviews and take an approach that’s 100% blind to gender, age, ethnicity and other irrelevant attributes

- Familiar—text chat interviewing is not only highly efficient, it’s also familiar to people of all ages

There are thousands of comments just like this …

“I have never had an interview like this in my life and it was really good to be able to speak without fear of judgment and have the freedom to do so.

The feedback is also great. This is a great way to interview people as it helps an individual to be themselves.

The response back is written with a good sense of understanding and compassion.

I don’t know if it is a human or a robot answering me, but if it is a robot then technology is quite amazing.”

Take it for a 2-minute test drive here >

Recruiters love using artificial intelligence in hiring

Recruiters love the TalentInsights that Sapia surfaces in SuccessFactors as soon as each candidate finishes their interview.

Together, Sapia and SuccessFactors deliver an approach that is:

- Fast—Ai-powered scores and rankings make shortlisting candidates quicker

- Insightful—Deep dive into the unique personality and other traits of each candidate

- Fair—Candidates are scored and ranked on their responses. The system is blind to other attributes and regularly checked for bias.

- Streamlined—Our stand-alone LiveInterview mobile app makes arranging assessment centres easy. Automated record-keeping reduces paperwork and ensures everyone is fairly assessed.

- Time-saving—Automating the first interview screening process and second-round scheduling delivers 90% time savings against a standard recruiting process.

Don’t believe us? Read the reviews!

See Recruiter Reviews here >

HR Directors and CHROs love reliable bias tracking

Well-intentioned organisations have been trying to shift the needle on the bias that impacts diversity and inclusion for many years, without significant results.

Together, Sapia and SuccessFactors deliver an approach that is:

- Measurable—DiscoverInsights, our operations dashboard, provides clear reporting on recruitment, including pipeline shortlisting, candidate experience and bias tracking.

- Competitive—The Sapia and SuccessFactors experience is loved by candidates, ensuring you’ll attract the best candidates, and hire faster than competitors.

- Scalable—Whether you’re hiring one hundred people, or one thousand, you can hire the best person for the job, on time, every time.

- Best-in-class—Sapia easily integrates with SuccessFactors to provide you with a best-in-class AI-enabled HRTech stack.