How much does recruitment software cost, and why is it worth the investment in 2026?

TL;DR

- Treat recruiting like a revenue engine, not overhead. Model talent economics the same way you model customers: acquisition cost, time to value, and lifetime value.

- Answer the hard question “how much does recruitment software cost?” by looking beyond licence fees to total cost of ownership, including integrations, data migration, training, and time saved.

- Anchor your business case in three levers that move numbers fast: interview-first screening, automation of scheduling and nudges, and blind, rubric-based scoring.

- Use a simple ROI formula: baseline cost and time today, forecast improvements with software, and convert hours saved, faster fill, and lower attrition into money.

- Start small with an overlay to your applicant tracking system, not a rip-and-replace. Prove lift on one role family in 30 days, then scale.

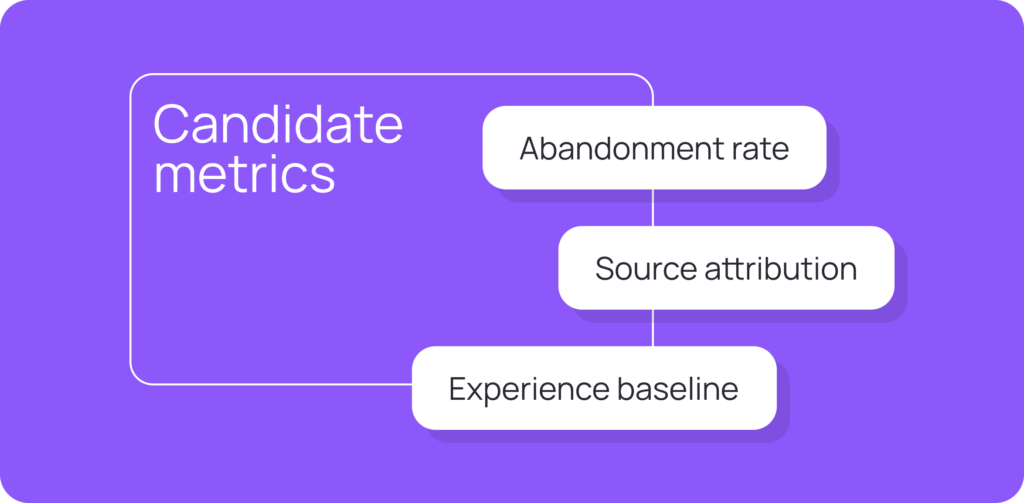

- Track a lean set of recruitment marketing metrics and hiring metrics that executives care about: time to first interview, time to offer, cost per hire, quality of hire, first-year attrition, and candidate experience.

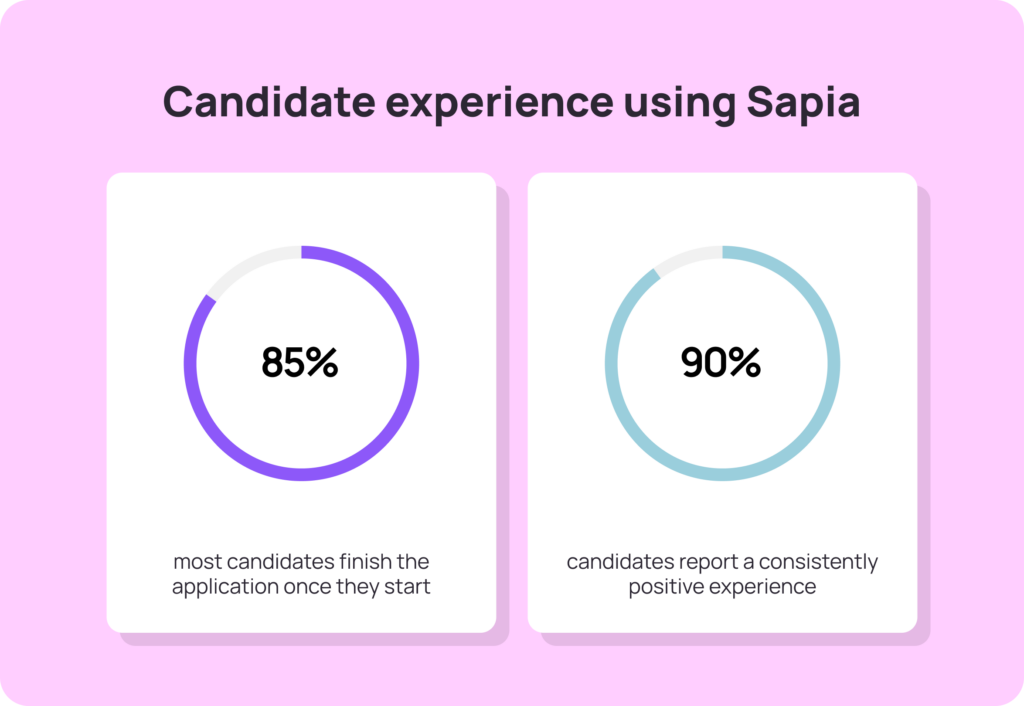

- Sapia.ai helps you run interview-first screening at apply, with blind scoring and explainable shortlists, so you reduce bias, lift completion, and cut time to hire without rebuilding your stack.

The 2026 reality: budgets are tight, expectations are higher

The last few years have been a grind for talent teams. Markets whipsawed, candidate expectations rose, and hiring managers still want quality, speed, and a great candidate experience. In this climate, recruitment is too often framed as a cost centre. Your job is to reframe it as a value generator with clear numbers that finance leaders recognise.

That starts with answering the question most executives quietly think about: how much does recruitment software cost and what do we get back for every pound or dollar spent?

Why recruiting is mislabelled as “cost”

Three reasons keep cropping up:

- Opaque outcomes. Leaders cannot see the line from recruitment activities to revenue outcomes.

- Overstacked tech. Many teams run a bulky ATS, point tools for scheduling and assessments, and spreadsheets for reporting. Costs add up without a clear value story.

- Manual work in the wrong places. Resume skims, email ping-pong, and unstructured interviews consume hours without improving hiring success.

Fixing the perception problem means fixing the operating model and measuring what matters.

What recruitment software really costs

When you are asked “how much does recruitment software cost?”, answer in terms of total cost of ownership, not just licences.

- Licences and users. Pricing is usually per user, per employee count, or per job slot. Some vendors offer a free trial or a free plan with limits on users or job postings.

- Implementation. Data migration, configuration, and SSO. Vendors that supply experienced data migration engineers and a dedicated account manager reduce risk and speed up go-live.

- Integrations and add-ons. Applicant tracking system connectors, calendar, background checks, and job boards. Add-ons and advertising costs can dwarf the base price if unmanaged.

- Training and change. Time for recruiters, hiring managers, and HR software admins to learn new workflows.

- Run costs and support. Ongoing support plans, advanced features, and storage.

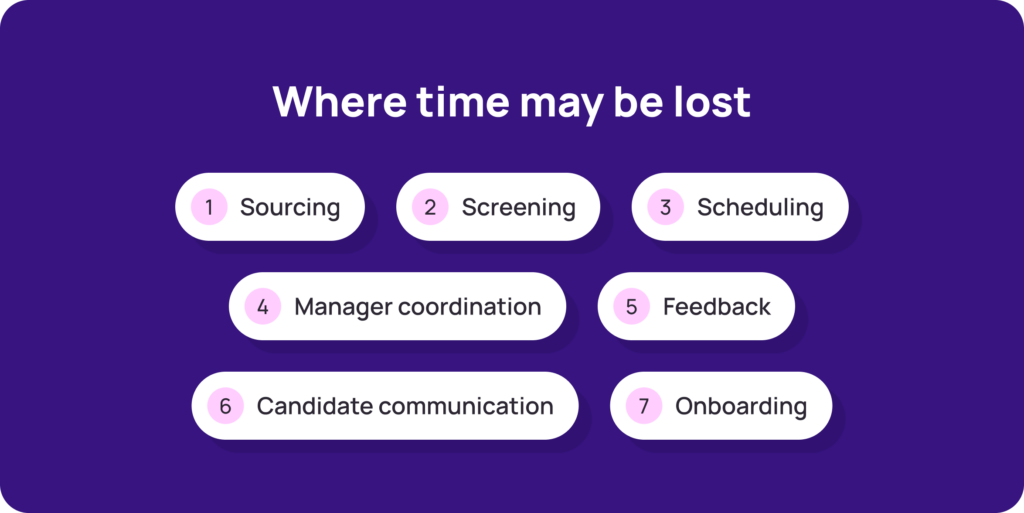

- Opportunity cost. The hours your team currently spends on low-value tasks that the platform can automate.

Frame cost alongside what you stop paying for: fewer agency fees, less overtime for small teams, reduced drop-off waste from manual processes, and lower first-year attrition.
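To make the cost lines above concrete, here is a minimal Python sketch of a first-year total cost of ownership, net of the spend you stop paying for. Every field name and figure is a hypothetical placeholder rather than vendor pricing; swap in your own quotes and rates. Opportunity cost is left out here on purpose, since it is easier to count once, on the benefits side of the business case.

```python
from dataclasses import dataclass


@dataclass
class FirstYearCosts:
    """Hypothetical first-year cost lines, all in one currency."""
    licences: float
    implementation: float          # data migration, configuration, SSO
    integrations_and_add_ons: float
    training_hours: float          # recruiter, manager, and admin time
    loaded_hourly_rate: float      # fully loaded cost of one staff hour
    support_and_run: float

    def total_cost_of_ownership(self) -> float:
        # Training is priced as staff time rather than as an invoice.
        training = self.training_hours * self.loaded_hourly_rate
        return (
            self.licences
            + self.implementation
            + self.integrations_and_add_ons
            + training
            + self.support_and_run
        )


def net_first_year_cost(costs: FirstYearCosts, avoided_spend: dict) -> float:
    """TCO minus spend you expect to stop paying (agency fees, overtime)."""
    return costs.total_cost_of_ownership() - sum(avoided_spend.values())


# Illustrative numbers only.
costs = FirstYearCosts(
    licences=24_000,
    implementation=6_000,
    integrations_and_add_ons=4_000,
    training_hours=80,
    loaded_hourly_rate=50,
    support_and_run=2_000,
)
avoided = {"agency_fees": 15_000, "overtime": 3_000}

print(costs.total_cost_of_ownership())      # 40000
print(net_first_year_cost(costs, avoided))  # 22000
```

Keeping avoided spend in a separate dictionary makes it easy for finance to challenge each line on its own.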

A plain-English ROI model you can copy

Step 1: Baseline today.

- Number of hires per quarter

- Time to first interview and time to hire

- Cost per hire (internal and external recruiting costs)

- First-year attrition

- Recruiter hours spent on screening, scheduling, and feedback

- Candidate satisfaction or candidate Net Promoter Score

Step 2: Target improvements with software.

- Interview-first screening for 100% of applicants

- Automated reminders and self-serve scheduling

- Blind, rubric-based scoring with explainable shortlists

- Feedback for all unsuccessful candidates

Step 3: Convert gains into money.

- Hours saved × fully loaded hourly rate

- Days shaved off time to fill × revenue or productivity per day for the open role

- Reduction in agency spend and job board waste

- Lower first-year attrition × replacement cost avoided

ROI = (Annual benefits – Total software cost) ÷ Total software cost.

Keep the model conservative. Finance will trust numbers you can defend.
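The three steps and the ROI formula can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a finished finance model: the parameter names are hypothetical, the faster-fill gain is assumed to apply to every hire in the year, and the attrition gain is expressed as a number of leavers avoided. All figures are made up.

```python
def annual_benefits(
    hours_saved: float,
    loaded_hourly_rate: float,
    days_saved_per_hire: float,
    value_per_open_day: float,
    hires_per_year: int,
    agency_and_board_savings: float,
    leavers_avoided: float,
    replacement_cost: float,
) -> float:
    """Convert the Step 3 gains into one annual money figure."""
    time_value = hours_saved * loaded_hourly_rate
    # Assumption: each hire is filled days_saved_per_hire days sooner.
    speed_value = days_saved_per_hire * value_per_open_day * hires_per_year
    attrition_value = leavers_avoided * replacement_cost
    return time_value + speed_value + agency_and_board_savings + attrition_value


def roi(benefits: float, total_software_cost: float) -> float:
    """ROI = (annual benefits - total software cost) / total software cost."""
    if total_software_cost <= 0:
        raise ValueError("total_software_cost must be positive")
    return (benefits - total_software_cost) / total_software_cost


# Illustrative inputs only.
benefits = annual_benefits(
    hours_saved=500,
    loaded_hourly_rate=50,
    days_saved_per_hire=5,
    value_per_open_day=200,
    hires_per_year=40,
    agency_and_board_savings=15_000,
    leavers_avoided=2,
    replacement_cost=10_000,
)

print(benefits)                          # 100000
print(f"ROI: {roi(benefits, 40_000):.0%}")  # ROI: 150%
```

To keep the model conservative, rerun it with each input cut to a level you could defend in a budget review, and present that figure first.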

The operating shifts that actually move the dial

If you want to see results quickly, start with these three shifts.

1) Make interview-first your anchor

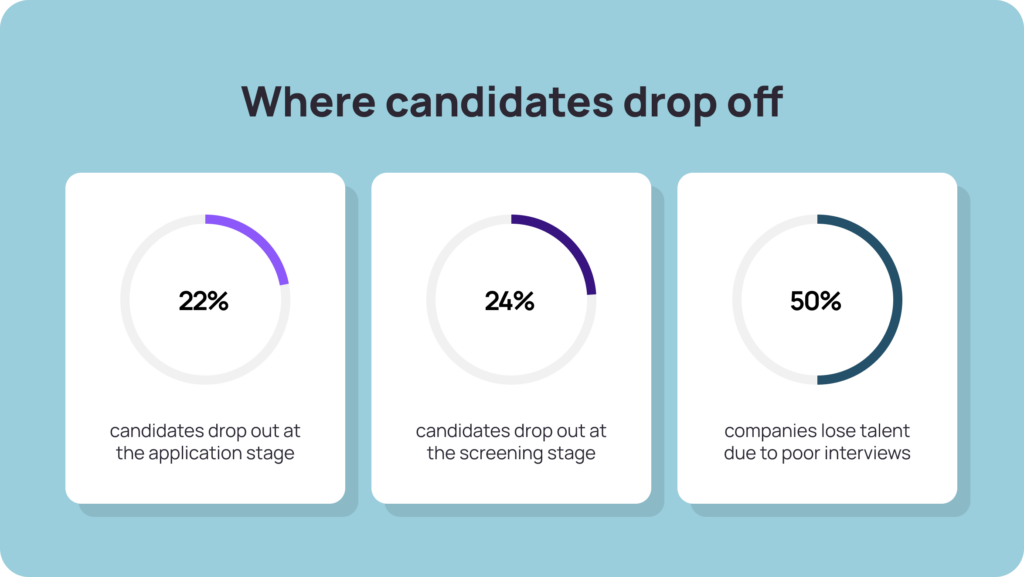

The biggest drop-off happens between apply and first response. Trigger a short, structured, mobile interview for every applicant at the point of application. With Sapia.ai, candidates answer competency prompts via chat, on their phone, in their own time. The first pass is blind to personal identifiers and scored to rubrics, giving hiring managers explainable shortlists fast.

Results you can expect to see:

- Higher apply-to-interview completion

- Fewer no-shows due to automated reminders and self-scheduling

- Shorter time to first interview and time to offer

2) Automate what humans should not do

Scheduling, rescheduling, and reminder cadences are classic automation wins. Advanced automation removes the back-and-forth without removing humanity. Candidates receive clear timelines and can move themselves to a better slot when life intervenes.

That being said, there is still a lot that can be done to make the human experience more personal. Sign up for a free download of our Humanising Hiring eBook today.

3) Standardise decision quality

Blind scoring with anchored rubrics improves fairness and consistency. That protects against unstructured interviews that shift goalposts and damage hiring success. Sapia.ai produces explainable reasoning on ranked shortlists so managers can act in one tap and you retain a defensible audit trail.

Answering the pricing question like a CFO

Executives will still ask about price. Here is a structured way to reply without promising the moon.

- Licence models you will see. Per user per month, per job slot, or priced based on employee count. Some platforms bundle an ATS with assessment tools, others overlay your existing ATS software.

- What most customers actually pay. Varies by scale, but the real driver is usage. Keep seats lean, use role-based access, and consolidate point tools.

- What matters more than price. Transparent pricing, time to value, and measurable lift on your key metrics within the first 30 to 60 days.

If you are comparing options, look for:

- Overlay model that works with your current applicant tracking system

- Customisable workflows and accessible, mobile-first candidate experience

- Advanced reporting that your stakeholders will actually read

- Ethical, AI-powered candidate matching that is explainable and auditable

- Real support, not just a help centre

A 30/60/90 day plan to prove value fast

Days 0–30: Pilot and baseline

- Choose one high-volume role family and one region

- Publish structured prompts and rubrics

- Switch on interview-first at apply

- Track time to first interview, completion rate, and cost per hire

Days 31–60: Scale the proof

- Add self-scheduling and expiry-aware reminders

- Roll out explainable shortlists to hiring managers with simple SLAs

- Send feedback to all candidates, not just the successful candidates

Days 61–90: Lock in the system

- Extend to more sites or roles

- Publish dashboards for leadership

- Refresh calibration and improve rubrics using real answers

Sapia.ai follows this pattern. It overlays your ATS, delivers interview-first screening with blind scoring, and provides dashboards for time, cost, diversity, and quality of hire. You get speed without losing control.

The metrics leaders care about in 2026

Keep the scorecard short and useful.

- Speed: time to first interview, time to hire

- Cost: cost per hire, agency percentage, internal versus external costs

- Quality: quality of hire proxy, first-year attrition

- Flow: apply-to-interview completion, interview show rate, acceptance rate

- Experience: candidate experience scores and manager satisfaction

Use these to show channel effectiveness and recruitment funnel effectiveness, not just activity volume or how many candidates you sourced.
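Several of the scorecard lines above are simple ratios of funnel counts, so they can be computed straight from an ATS export. The sketch below assumes hypothetical count and cost names, treats accepted offers as hires, and uses invented figures. Definitions vary between teams, so agree on them before publishing a dashboard.

```python
def safe_rate(numerator: float, denominator: float) -> float:
    """Return a ratio, or 0.0 when there is nothing to divide by."""
    return numerator / denominator if denominator else 0.0


def scorecard(counts: dict, costs: dict) -> dict:
    """Derive flow and cost metrics from raw funnel counts and spend."""
    hires = counts["offers_accepted"]  # assumption: accepted offer = hire
    return {
        "apply_to_interview_completion": safe_rate(
            counts["first_interviews_completed"], counts["applications"]
        ),
        "interview_show_rate": safe_rate(
            counts["interviews_attended"], counts["interviews_scheduled"]
        ),
        "acceptance_rate": safe_rate(
            counts["offers_accepted"], counts["offers_made"]
        ),
        "cost_per_hire": safe_rate(
            costs["internal"] + costs["external"], hires
        ),
    }


# Illustrative quarter for one role family.
counts = {
    "applications": 1000,
    "first_interviews_completed": 800,
    "interviews_scheduled": 100,
    "interviews_attended": 90,
    "offers_made": 50,
    "offers_accepted": 40,
}
costs = {"internal": 60_000, "external": 40_000}

for name, value in scorecard(counts, costs).items():
    print(f"{name}: {value:,.2f}")
```

Running the same function on the pilot role before and after go-live gives a like-for-like comparison for the 30-day review.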

Bringing it together

Recruitment turns into a cost centre when it runs on manual effort, vague criteria, and bloated stacks. It becomes a value engine when you standardise around interview-first evidence, automate the repetitive work, and measure outcomes that finance can recognise.

If you are ready to show the numbers, start with one role, prove lift on speed and cost per hire, and publish the gains. Then scale. Want to learn more about getting started? Book a demo with Sapia.ai today.

Common FAQs about recruiting software

Choose an overlay that connects to your existing ATS via standard integrations. You keep familiar workflows and add intelligence on top.

Small teams benefit the most from automation. A light overlay that standardises interviews and automates reminders will free hours each week and lift hiring manager satisfaction.

Ask vendors about audit logs, data residency, retention schedules, and privacy-by-design. You want secure handling of candidate data and clear consent language.

You do not need to change where you advertise. Keep job postings live where they work for you. The gain comes from what happens after a candidate applies: structured evidence, faster decisions, and less waste.

You can try before you commit. Run a 30-day pilot on one role. Most modern platforms offer a free trial or easy proof-of-concept with a custom quote for scale.