PredictiveHire finalist for In-House Innovation in Recruitment Award at UK’s Recruiter Awards

PredictiveHire and Iceland Foods are finalists in the 2021 Recruiter Awards for the category In-House Innovation in Recruitment.

Established in 2002, the Recruiter Awards are the UK’s most prestigious honours in recruitment.

The awards recognise best practice and celebrate achievement by agencies and in-house recruiters during the prior 12 months, also throwing a spotlight on marketing and technology. The In-House Innovation in Recruitment category recognises outstanding innovation by an in-house recruitment team that has led to the achievement of strategic business goals.

The success story behind PredictiveHire’s nomination begins in 2020, a year that created a crisis for Iceland, as it did for many. Increased trade and COVID-19 absences left store leaders severely short of time, yet both the need to hire and the number of applicants surged. At the time, the recruitment process was 100% manual and handled entirely at store level by store managers.

PredictiveHire’s contribution to In-House Innovation in Recruitment

Iceland had to innovate. Hiring needed to be centralised and automated.

Iceland developed a set of ‘non-negotiable’ criteria for an automated platform and began looking. They ultimately chose PredictiveHire as their interview automation partner – they loved the notion of ‘hiring with heart’.

Once PredictiveHire was selected, we integrated with their API (Kallidus), and the applications started rolling in within four weeks. Iceland estimated that in the first four months they saw 5x payback, freed up 8,000 hours for their time-poor store managers across the organisation, and processed over 50,000 applications every month. They also found that 97% of candidates who followed the link to apply completed the application process and 99% of candidates reported a positive experience.

You can read the full Iceland Foods + Kallidus + PredictiveHire story here.

The award will be presented at the annual Gala in London on September 23, 2021.

The rigorous judging process is run by a panel of 33 credible and experienced industry professionals, including Rob McCargow, Director of Artificial Intelligence at PwC UK; James Fieldhouse, M&A Managing Director at BDO; and Karolina Minczuk, Relationship Director at NatWest Bank.

The other nominees are BDO in partnership with Amberjack; BUPA; GQR; and Virgin Media in partnership with Amberjack.

About PredictiveHire

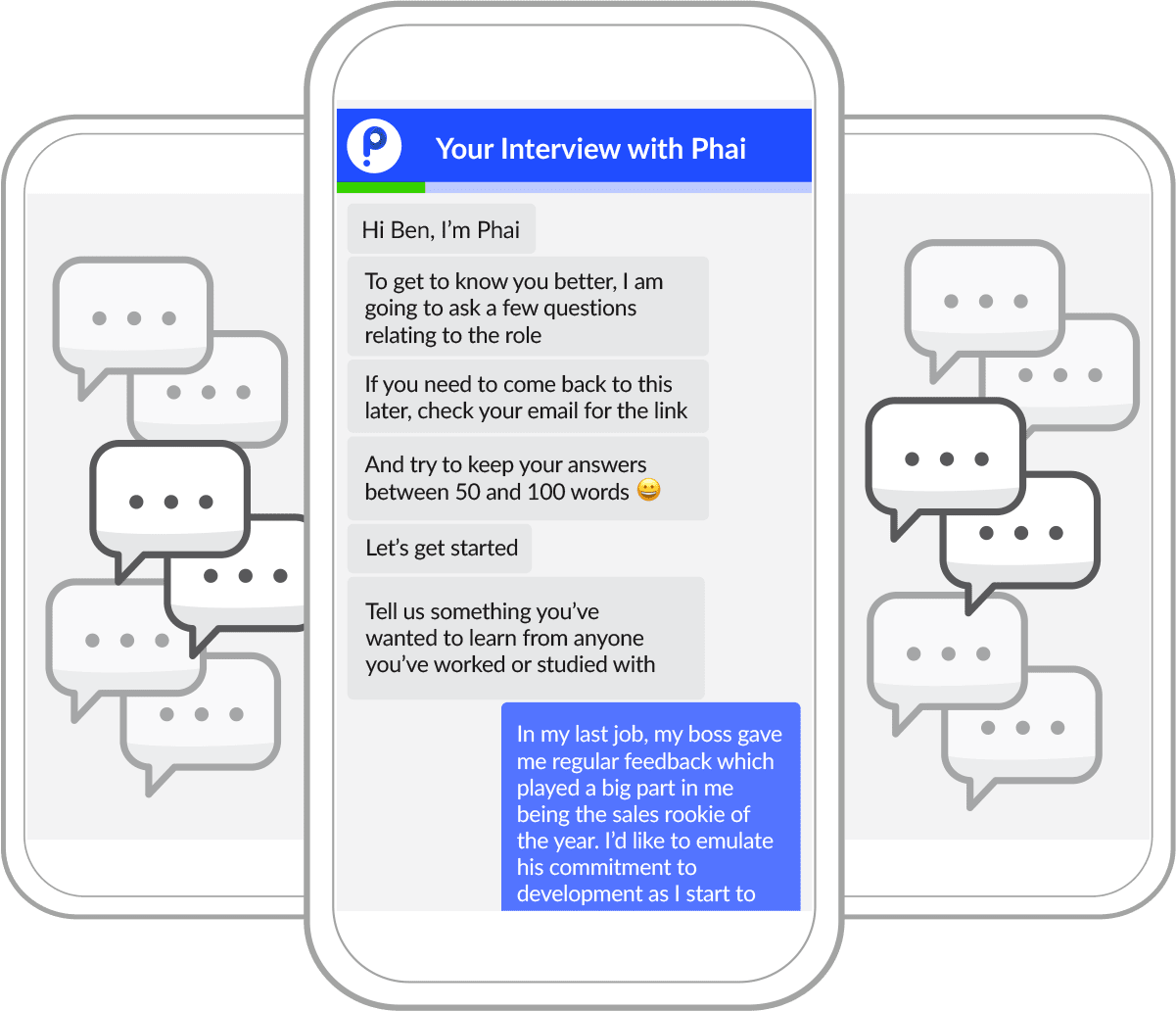

PredictiveHire is a frontier interview automation solution that solves three pain points in recruiting: bias, candidate experience, and efficiency. Customers are typically those that receive an enormous number of applications and are dissatisfied with how much collective time is spent hiring. Unlike other forms of assessment, which can feel confrontational, PredictiveHire’s FirstInterview™ is built on a text-based conversation, which feels totally familiar because text is central to our everyday lives.

Every candidate gets a chance at an interview by answering five relatable questions. Every candidate also receives personalised feedback (99% CSAT). AI then reads candidates’ answers for best fit, translating assessments into personality readings, work-based traits and communication skills. Candidates are scored and ranked in real time, making screening 90% faster. PredictiveHire fits seamlessly into your HR tech stack, and with it you will get off-the-Richter efficiency, reduce bias and humanise the application process. We call it ‘hiring with heart.’

You can try PredictiveHire here (you’ll receive real personality insights), or leave us your details and one of our team will walk you through the whole product.

If you’d like to stay up to date with PredictiveHire, you can subscribe to our newsletter here.