The Changing Role of the Organizational Psychologist As HR Embraces AI

Advances in technology, shifts in workplace dynamics, and evolving employee expectations are reshaping the HR landscape. At the forefront of this transformation is the integration of Artificial Intelligence (AI), including tools like ChatGPT, into the heart of HR practices. This integration raises questions about the role of organizational psychologists, and how embracing ethical AI to support people processes can foster a more dynamic, inclusive, and effective workplace.

The Urgent Need for Adaptation

Leading researchers from Oxford University and Deloitte estimate that up to 35% of job types could be replaced by machines within the next two decades. This statistic is not just a forecast; it is an urgent call for organizational psychologists to explore new ways of coexisting and collaborating with this technology. The practice of organizational psychology needs to adapt to this new landscape, and fast. As CHROs feel the pressure to adopt AI, the psychologists who advise them must be equipped with the knowledge and tools to help them make the right decisions about how AI is used in their people processes.

Acceptance and Flexibility: New Candidate & Employee Expectations

Employees and candidates crave flexibility, inclusivity, and personalized experiences. Traditional, top-down HR solutions fail to meet these needs, which diminishes the returns on continued investment in traditional HR tech stacks.

In contrast, AI offers a promising alternative, with generative AI tools like ChatGPT fundamentally changing how many of us work, learn, and stay productive. These tools enable a seismic shift from static, linear processes to conversational interactions that give individuals agency, empowering them and valuing their time.

The Role of Organizational Psychologists in an AI-enabled World

As AI becomes more prevalent in HR, organizational psychologists must augment their expertise with knowledge of the fundamentals of AI. They must also use their scientific knowledge to ensure that the AI being used is responsible, ethical, and fit for purpose.

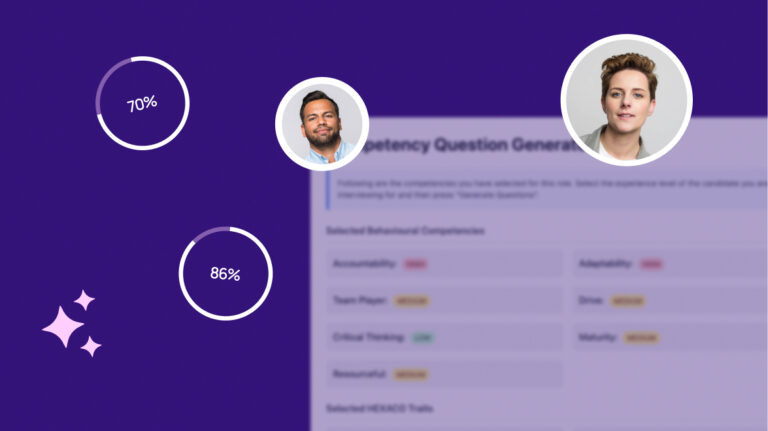

This involves understanding the science behind AI-powered tools, ensuring ethical application, and focusing on the development of systems that offer genuine value to both employees and organizations. There are three areas of impact in which organizational psychologists should already be playing a core role:

- Advocacy for Ethical AI: Just as organizational psychologists ensure that traditional assessments and programs are responsible and ethical, they should now champion the development and implementation of AI solutions that respect privacy, ensure fairness, and eliminate bias.

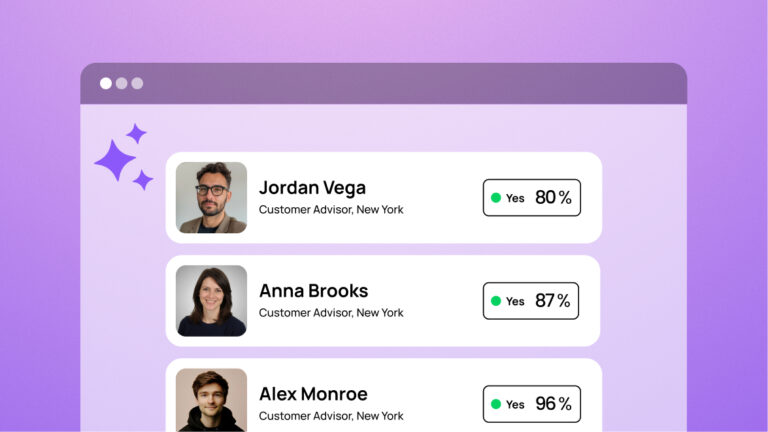

- Facilitating Human-AI Collaboration: The intersection of humans and AI is where the most exciting possibilities lie. AI can be used to enhance decision-making and productivity without fully displacing the human element. Organizational psychologists must bring their expertise to the design of these human-AI processes.

- Enhancing Employee Experience: It’s the goal of all HR departments to create personalized, flexible, and inclusive work environments that attract and retain top talent. AI can be used to help achieve these goals, and organizational psychologists should be up to date in their knowledge of which AI tools can help.

So, how can an organizational psychologist begin to equip themselves with this knowledge?

Understand the fundamentals of AI and how they’re being used in HR

Know your ML from your NLP. This quick reference guide will help organizational psychologists understand a simple framework of AI terminology and how each component can be used to optimize and enhance HR processes.

Get acquainted with the concepts of Responsible AI

While the potential of AI is immense, organizational psychologists must also be aware of its limitations and ethical considerations. The reliance on AI must be balanced with human oversight to ensure accuracy, fairness, and the well-being of employees.

‘Ethical AI’ and ‘Responsible AI’ are terms that are used a lot. Without prior knowledge, however, it can be difficult to know what makes an AI ‘ethical’ or ‘responsible’.

Responsible AI generally refers to the ethical, safe, trustworthy, and fair development, deployment, and use of AI systems. Ethically, the focus is on transparency, bias mitigation, accountability, privacy, accuracy, human oversight, safety, societal impact, and inclusivity. Legally, aspects such as regular audits, risk management, transparency notices, and thorough documentation stand out.
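To make the idea of a bias audit more concrete, here is a minimal sketch of one check that many organizational psychologists will already know from traditional assessment work: the adverse impact ratio, screened against the four-fifths rule of thumb from the US EEOC Uniform Guidelines. Choosing this check is our own assumption for illustration; it is not a complete audit, and a ratio below 0.8 is a prompt for closer investigation rather than proof of bias. The function names, group labels, and counts are all hypothetical.

```python
from typing import Dict, List, Tuple

# Four-fifths rule of thumb: a group's selection rate below 80% of the
# highest group's rate is commonly treated as a signal worth investigating.
FOUR_FIFTHS_THRESHOLD = 0.8

# Maps a group label to (number selected, number of applicants).
Outcomes = Dict[str, Tuple[int, int]]


def selection_rates(outcomes: Outcomes) -> Dict[str, float]:
    """Return selected / applicants for each group."""
    rates = {}
    for group, (selected, applicants) in outcomes.items():
        if applicants <= 0:
            raise ValueError(f"{group} has no applicants")
        rates[group] = selected / applicants
    return rates


def adverse_impact_ratios(outcomes: Outcomes) -> Dict[str, float]:
    """Return each group's selection rate divided by the highest rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any selections")
    return {group: rate / highest for group, rate in rates.items()}


def flagged_groups(
    outcomes: Outcomes, threshold: float = FOUR_FIFTHS_THRESHOLD
) -> List[str]:
    """Return the groups whose ratio falls below the threshold, sorted."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical counts, invented purely for illustration.
example_outcomes: Outcomes = {
    "group_a": (50, 100),
    "group_b": (30, 100),
}

if __name__ == "__main__":
    print(adverse_impact_ratios(example_outcomes))
    print(flagged_groups(example_outcomes))
```

In practice a check like this would sit alongside statistical significance testing, sample-size considerations, and human review of the process that produced the outcomes.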

For organizational psychologists aiming to understand responsible AI use, this paper offers an overview and suggests seven essential questions to evaluate an AI solution.

Start with the business challenges

Many of us have fallen into the trap of building a business case to buy some new tech rather than building business cases around problems to be solved and then finding the right technology partner. When HR leaders start with the business problem, they measure their success in business metrics, not HR metrics.

Successful implementation of AI starts with the business challenges that need to be solved and builds a solution around them. Working with your stakeholders to keep any adoption of AI centered on a real challenge gives the project a far better chance of success.

Consider how AI creates humanized experiences

Fundamentally, any new technology, AI or not, should enhance the experience of the user, whether that’s a candidate, employee, or hiring manager.

The rise of remote work and the demand for greater autonomy have clarified that connection is the new culture, and conversation is the new medium. Smart chat, powered by AI, is fundamentally different from basic chatbots. It learns from every interaction, providing personalized feedback and a human-like experience. This approach enhances engagement and fosters a culture of continuous learning and self-actualization.

Smart chat provides the opportunity for humanized experiences, at scale.

The future is here, and it’s time to embrace it

As we stand on the brink of a new era in organizational psychology, it is clear that the future is conversational AI. Sapia.ai’s pioneering approach, which combines ethical AI with a deep understanding of human behavior, exemplifies how we can use technology to enhance our understanding of ourselves and others.

The role of the organizational psychologist is evolving, driven by the rapid advancements in AI and changing workplace dynamics. By embracing these changes, we can redefine what it means to work, lead, and succeed in the digital age. The future of work is here, and it’s conversational, inclusive, and intelligent.