How to measure candidate experience (And use the insights to improve your hiring process)

TL;DR: Most companies struggle to create an excellent candidate experience, despite knowing it impacts talent acquisition. Fortunately, this isn’t rocket science. In this article, we share a simple, three-step process to measure candidate experience so you can improve it accordingly.

Every company talks about the candidate experience, but few actively invest in it.

According to a recent Sapia-sponsored Aptitude Research report, 68% of companies admit they have no plans to address the interview portion of their candidate experience.

Despite this, 50% of these companies know they’re losing talent due to their application and interview processes. What’s more, according to Forbes, companies that prioritize a positive candidate experience can see their average quality-of-hire improve by 70%.

Why the unwillingness to address such an important facet of recruitment? In most cases, the teams responsible for enacting change to the candidate experience are steeped in the everyday throes of talent acquisition. As such, they don’t have time to examine their processes.

Is this where you find yourself? Totally understandable; the current labor market is tough. If your house is on fire, you’re probably not focused on how well you treat visitors to your doorstep.

We sat down with Lars van Wieren, CEO at Starred, a candidate experience measurement tool, for an episode of our Pink Squirrels! podcast. Lars offered some practical tips on getting started with candidate experience so that your company can make a positive impression on job seekers.

Step 1: Benchmark your candidate experience

As the saying goes, what gets measured, gets managed.

Lars recommends starting with a basic benchmark for your candidate experience. This doesn’t have to be difficult, and you don’t need a fancy candidate experience tool to start gathering data.

Simply have your hiring team ask candidates the classic Net Promoter Score (NPS) question: “On a scale of 0 to 10, how likely are you to recommend our company to a friend or colleague?”

Ideally, you should gather feedback on your candidate experience at each stage of the application process. But to begin with, ask the question at the very end of your entire hiring process.

To get the best, least-biased data, you need to ask all applicants, whether or not they’ve been shortlisted or hired. If you only ask highly qualified candidates, or the few people who have received a job offer, you’re likely to get overly flattering results that don’t reflect your true candidate experience.

The NPS tracking question is easily configurable and embeddable into automated emails, meaning it can be set up through your ATS with little additional work.

Keep things simple when you begin to analyze the data. Just dump the responses into a spreadsheet and calculate your score: the percentage of promoters (9s and 10s) minus the percentage of detractors (6 and below). If your candidate net promoter score is below 0, you’ve got work to do. If it’s between 0 and +30, you’re meeting candidate expectations. And if it’s above 30, well done!

(If you’re reading this, you probably won’t score above 30 on the first go-round. That’s okay. The goal is to find out how much work you have to do. This kind of candidate feedback will help.)
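If you'd rather script the calculation than use a spreadsheet, here is a minimal Python sketch. It assumes the standard NPS convention (promoters score 9 or 10, detractors score 6 or below, and the result is the percentage of promoters minus the percentage of detractors). The function names and sample responses are made up for illustration; the thresholds in `interpret` are the ones described above.

```python
from typing import Iterable


def candidate_nps(scores: Iterable[int]) -> float:
    """Return the net promoter score (-100 to +100) for a set of survey answers."""
    scores = list(scores)
    if not scores:
        raise ValueError("need at least one response")
    promoters = sum(1 for s in scores if s >= 9)   # answered 9 or 10
    detractors = sum(1 for s in scores if s <= 6)  # answered 6 or below
    return 100 * (promoters - detractors) / len(scores)


def interpret(nps: float) -> str:
    """Map a score to the rough bands used in this article."""
    if nps < 0:
        return "work to do"
    if nps <= 30:
        return "meeting candidate expectations"
    return "well done"


# Hypothetical answers from ten applicants, hired or not.
responses = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
score = candidate_nps(responses)
print(score, "->", interpret(score))
```

With these sample answers, four promoters and three detractors out of ten responses give a score of +10, which lands in the "meeting candidate expectations" band.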

The benefit of benchmarking NPS is that it gives your business a single, easy-to-understand proxy for the health of your candidate experience. Once you’ve got the number, you can start to make small changes to your recruitment process to see if you can improve it.

For example, you might consider the following changes to level up your candidate experience:

- Maybe we don’t need to ask for cover letters. Most candidates don’t like writing them (statistically speaking), and they’re a time-suck for our team.

- Maybe we can shorten the content on our careers page. It’s long and difficult to navigate.

- Maybe we should consider using Easy Apply functionality.

- Maybe we are running too many interviews in our process. Let’s do fewer and see how that works.

At the same time, look at your candidate abandonment rate. Candidate experience scores and abandonment rates are almost always linked. Improve one, you improve the other.

Step 2: Gather data at each step of your job application process

Our joint report with Aptitude Research uncovered some interesting data on the importance of two-way feedback between candidates and employers. Specifically, gathering and acting on mutual feedback:

- Boosts quality of hire from 36% to 58%, on average

- Boosts candidate experience from 34% to 44%, on average

- Improves first-year retention from 35% to 50%, on average

To ensure your feedback is accurate, gather it at each stage of the application process. The six stages are: application, screening, interviewing, assessment, offer, and rejection. By doing this, you’ll know exactly where your candidate experience is lacking, and you can make fast, effective changes.
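As a rough illustration of how stage-level feedback could be compared, the sketch below groups survey answers by stage and computes a net promoter score for each one (percentage of 9s and 10s minus percentage of answers at 6 or below, the standard NPS convention). The data layout, function names, and sample answers are assumptions for the example, not something your ATS will export in this exact shape.

```python
from collections import defaultdict

# The six stages named above, in process order.
STAGES = ["application", "screening", "interviewing",
          "assessment", "offer", "rejection"]


def nps(scores):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by_stage(responses):
    """Group (stage, score) pairs and score each stage that has answers."""
    grouped = defaultdict(list)
    for stage, score in responses:
        grouped[stage].append(score)
    return {stage: nps(grouped[stage]) for stage in STAGES if stage in grouped}


# Hypothetical survey answers tagged with the stage they were collected at.
responses = [
    ("application", 9), ("application", 10), ("application", 7),
    ("interviewing", 4), ("interviewing", 9),
    ("interviewing", 6), ("interviewing", 5),
    ("offer", 10), ("offer", 8),
]

by_stage = nps_by_stage(responses)
weakest = min(by_stage, key=by_stage.get)  # stage with the lowest score
print(by_stage)
print("Start with:", weakest)
```

In this made-up data the interviewing stage scores lowest, so that is where you would look first.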

Multi-step candidate experience surveys may not be easy to do with your current setup. But they are relatively simple to configure if your ATS/chosen software solution has the capability. So, make sure you add candidate surveys to your hiring strategy—and actually act on the data collected.

Step 3: Get your team around the candidate experience data

Creating an exceptional candidate experience is the job of your entire talent acquisition or recruitment team. But it’s a good idea to appoint an internal candidate experience champion: someone who is responsible for collating the benchmark data and consistently reporting on it. (Note: If you don’t have an internal champion at this time, the task should fall to your hiring manager.)

What’s the proper reporting cadence? It depends on the number of applications you have and the length of your application process. A monthly update works best for most companies as it gives them an insightful trendline without becoming overwhelming. Just make sure you track key metrics like application drop-off rate, time to hire, offer acceptance rate, and candidate net promoter score.

While the task of improving candidate experience is never done, it shouldn’t require a complete overhaul to your recruitment process. Start small, make iterative improvements over time, and focus on making at least one more candidate smile.

Achieve higher candidate satisfaction scores

Applicants remember poor candidate experiences. Over time, your poor company reputation will become known, and it will be extremely difficult to land top talent. People will see your job posts and automatically skip them because they don’t want to subject themselves to a subpar hiring process.

Good news: the tips in this article will help you measure candidate experience. Once you know where your company stands, you can improve your approach to recruitment. This is essential in today’s competitive job market, where the best employees are the difference between success and failure.

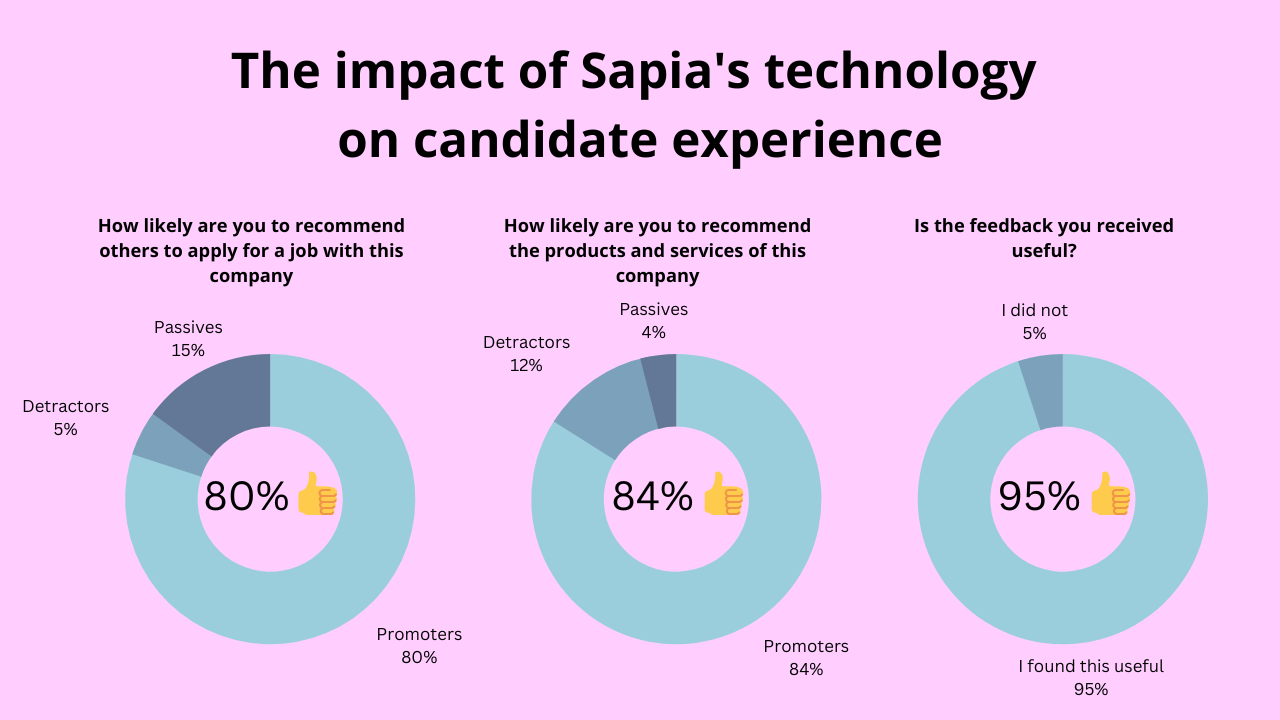

Looking for tools to help you level up your candidate experience? Try Sapia! Our AI Smart Interviewer automates scheduling, screening, interviewing, and assessing tasks so that you can connect with better applicants in less time.

Just as important, the process is ideal for job seekers, as our platform automatically provides feedback post-interview. This helps candidates perceive your brand in a new, better light, while helping them perform better in future interviews.

Book a demo of Sapia today to see if it’s the right tool for your hiring team.

FAQs about building a positive candidate journey

A poor candidate experience damages your employer brand and makes it harder to attract top talent. Candidates talk about their experiences, and negative reviews spread quickly through professional networks and review sites. Companies with strong candidate experiences see higher-quality hires, better retention rates, and stronger recruitment metrics overall.

Yes, your company should give candidate feedback. Providing feedback shows respect for candidates’ time and effort, helps them improve for future opportunities, and positions your company as thoughtful and professional. Plus, rejected candidates who receive constructive feedback often maintain positive feelings about your brand and may refer others or reapply later.

Try Sapia’s AI-powered talent intelligence platform that improves candidate experience through chat-based structured interviews, automated screening and scheduling, and actionable insights. Our system also provides automatic feedback to candidates post-interview, which candidates love, and uses validated data science to create competency models for each role, which saves hiring managers time.