Exploring HireVue AI: Does AI Reduce Bias in Hiring?

HireVue, an AI-driven recruitment company, has recently been taken to the US Federal Trade Commission by a prominent rights group, which claims that HireVue’s use of face-scanning technology to assess job candidates’ “employability” amounts to unfair and deceptive trade practices.

It’s just the latest concerning story about the use of AI in HR practices, and it would seem quite reasonable to err on the side of extreme caution.

HireVue claims to use AI to analyse video interviews, drawing on data points such as a person’s speaking voice and facial movements to infer things like their willingness to learn and personal ‘stability’.

One of the most compelling projects to expose the flaws in this sort of biometric screening is Melbourne University’s Biometric Mirror, which uses AI to display people’s personality traits and physical attractiveness based solely on a photo of their face.

Beyond leaving you just a little insulted by its assumptions, Biometric Mirror highlights the potential real-world consequences of algorithmic bias, which is a justifiable concern for our time.

Where we end up, though, is that AI as a whole is tarred with a broad brush as the source of bias amplification, which is essentially what both HireVue and Biometric Mirror are doing, whether HireVue admits it or not.

Depending on which media you read, technology, and specifically AI, will create or destroy thousands of jobs. Either way, there is no doubt it is already radically changing many jobs, as well as how we apply for and hire for them.

The point here is that AI itself is not the problem. In fact, when it comes to hiring specifically, AI is the only reliable way we have ever had of removing bias in recruiting. It’s important we understand the cost of fearing this technology: a massive lost opportunity to improve the livelihoods of many.

In the case of HireVue, video is an obviously problematic data source because it exposes race and gender, along with their associated biases. You might be surprised to know, though, that CVs are just as bad, and they are in much broader use by organisations as a first pass for algorithms to assess a candidate’s suitability.

Recently, Amazon analysed 10 years of CV data to build a predictive model to help filter through hundreds of thousands of applications to work at the company. The sample group was mostly male, so the model built off this training data naturally ended up mirroring that sample group, which meant it preferred male CVs to female CVs.
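To make that mechanism concrete, here is a minimal sketch of how a scorer trained on skewed history ends up mirroring it. This is not Amazon’s model, whose details aren’t described here. The history, the tokens (`mens_rugby`, `womens_rugby`) and the function names are all invented for illustration, and the "model" is just a per-token hire rate.

```python
from collections import defaultdict

def token_hire_rates(history):
    """For each token, return the share of past CVs containing it that were hired."""
    seen = defaultdict(int)
    hired = defaultdict(int)
    for tokens, was_hired in history:
        for token in set(tokens):
            seen[token] += 1
            if was_hired:
                hired[token] += 1
    return {token: hired[token] / seen[token] for token in seen}

def score_cv(tokens, rates, default=0.5):
    """Score a CV as the average learned hire rate of its tokens."""
    tokens = set(tokens)
    if not tokens:
        return default
    return sum(rates.get(token, default) for token in tokens) / len(tokens)

# Invented toy history: most CVs, and most hires, are male-coded.
history = (
    [({"sales", "mens_rugby"}, True)] * 6
    + [({"sales", "mens_rugby"}, False)] * 2
    + [({"sales", "womens_rugby"}, True)] * 1
    + [({"sales", "womens_rugby"}, False)] * 1
)

rates = token_hire_rates(history)

# Two CVs that differ only in one gendered token.
male_coded = score_cv({"sales", "mens_rugby"}, rates)
female_coded = score_cv({"sales", "womens_rugby"}, rates)

print(male_coded > female_coded)  # the scorer has learned the historical skew
```

Nothing in the code mentions gender. The preference comes entirely from the past decisions it was trained on, which is why the choice of training data matters so much.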

My company, Sapia, has done its own research. We recently analysed ~13,000 CVs received over a five-year period, all for similar roles at a large sales-led organisation, and found that it’s unscientific to use CV data to choose good job candidates.

What CVs do have going for them is that they are text-based. This is an important distinction, because text is a data source for AI that is understandable and transparent.

What we need to make sure of, though, is that this data is free from historical bias: that it is “clean”, or comes from a neutral point of input. Can such a platform exist? I believe it can, and I believe it can change what it means to be truly ‘humane’ when hiring. I’m not just talking about removing age, name, and gender from CVs. That isn’t enough in itself.

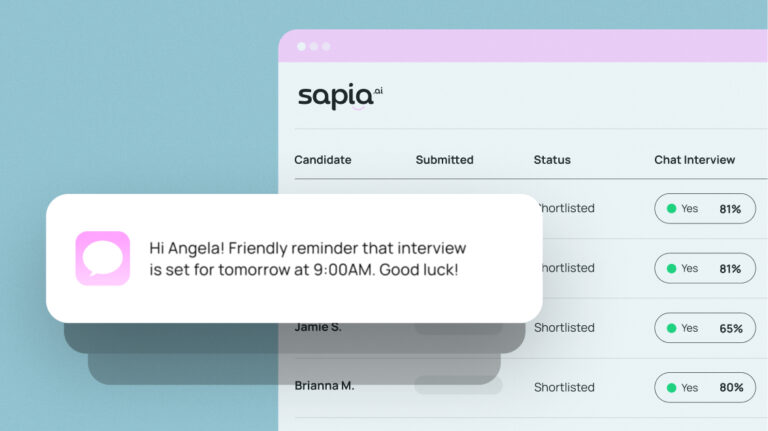

At Sapia, we work with dozens of companies across the world to help blind-screen thousands of candidates, and we know that it’s the behaviours and values of a potential co-worker that will influence their performance and tenure. Values such as commitment, and attitudes more generally, are invisible in a CV. We use text-based questions to understand motivations and behaviour in a way that we’ve proven removes bias amplification.

We’ve had 60-year-olds successfully apply and be hired by large corporations, who would admit that these stellar candidates might otherwise have been overlooked. We’ve seen introverts become star salespeople – a trend we are now picking up across other successful candidates.

So let’s try to look beyond the headline, which naturally attracts attention when it paints AI as the bogeyman.

Algorithms can be tested for bias and can be trained to remove bias, whereas humans, truthfully, can’t be.
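As a rough illustration of what testing for bias can look like, the sketch below applies one widely used screening check, the "four-fifths rule": compare each group’s shortlisting rate with the highest group’s rate and flag any group that falls below 80% of it. This is not a description of Sapia’s own testing. The group labels, the outcome data and the function names are invented for the example, and the 0.8 threshold is a conventional heuristic rather than proof of fairness.

```python
from collections import Counter

FOUR_FIFTHS = 0.8  # conventional threshold, used here as an assumption

def selection_rates(outcomes):
    """Return the share of candidates shortlisted within each group.

    `outcomes` is an iterable of (group, shortlisted) pairs.
    """
    totals, shortlisted = Counter(), Counter()
    for group, was_shortlisted in outcomes:
        totals[group] += 1
        if was_shortlisted:
            shortlisted[group] += 1
    return {group: shortlisted[group] / totals[group] for group in totals}

def impact_ratios(rates):
    """Divide each group's rate by the highest group's rate."""
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        # Nobody was shortlisted, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}

def flagged_groups(outcomes, threshold=FOUR_FIFTHS):
    """List the groups whose impact ratio falls below the threshold."""
    ratios = impact_ratios(selection_rates(outcomes))
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)

# Invented outcomes: 50 of 100 under-40s shortlisted, 30 of 100 over-40s.
outcomes = (
    [("under_40", True)] * 50 + [("under_40", False)] * 50
    + [("over_40", True)] * 30 + [("over_40", False)] * 70
)

print(flagged_groups(outcomes))  # ['over_40']
```

The same check can be re-run every time a model is retrained. That repeatability is exactly what is so hard to get from a panel of human screeners.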

We have this once-in-a-millennium opportunity to extend and enable better, fairer thinking through careful and conscious AI-assisted decisions. Let’s not blow it through our own bias against the very technology that can enable this change.