Beyond the checkbox: Rethinking human-in-the-loop for AI in hiring

TL;DR

- At high volume, traditional human-in-the-loop hiring is performance theatre, as most reviewers simply rubber-stamp outputs without the context to challenge them.

- Effective oversight requires different human roles at different stages: experts to encode competency frameworks before deployment, ethicists to define guardrails, and recruiters to apply contextual judgment where it matters most.

- Transparency is an architectural choice, not a feature to add later. Systems must show their reasoning so human review is genuine, not performative.

- The EU AI Act, NYC Local Law 144, and UK GDPR all impose concrete obligations on AI used in the recruitment process. Compliance therefore depends on explainable systems.

The scalability paradox

Imagine an organisation that screens tens of thousands of candidates a year, each requiring at least three minutes of meaningful human review. Even at 10,000 candidates, that adds up to 500 hours of expert cognitive labour, before any other recruiting work gets done.

At that scale, human-in-the-loop as it’s traditionally conceived is performance theatre. Recruiters will rubber-stamp hundreds of AI-scored candidates in a single afternoon, clicking through scores without the time or context to challenge them.
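The arithmetic behind that claim is easy to sketch. The snippet below is illustrative only: the three-minute figure comes from the scenario above, while the function name and the sample volumes are assumptions chosen for the example.

```python
def review_hours(candidates: int, minutes_per_review: float = 3.0) -> float:
    """Total reviewer hours needed to give every candidate a meaningful review."""
    return candidates * minutes_per_review / 60


# Hypothetical annual volumes; substitute your own numbers.
for volume in (10_000, 20_000, 50_000):
    print(f"{volume:>6} candidates -> {review_hours(volume):,.0f} hours of review")
```

Even the smallest volume here works out to 500 hours, which is roughly three months of one person's full-time work spent on nothing but review.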

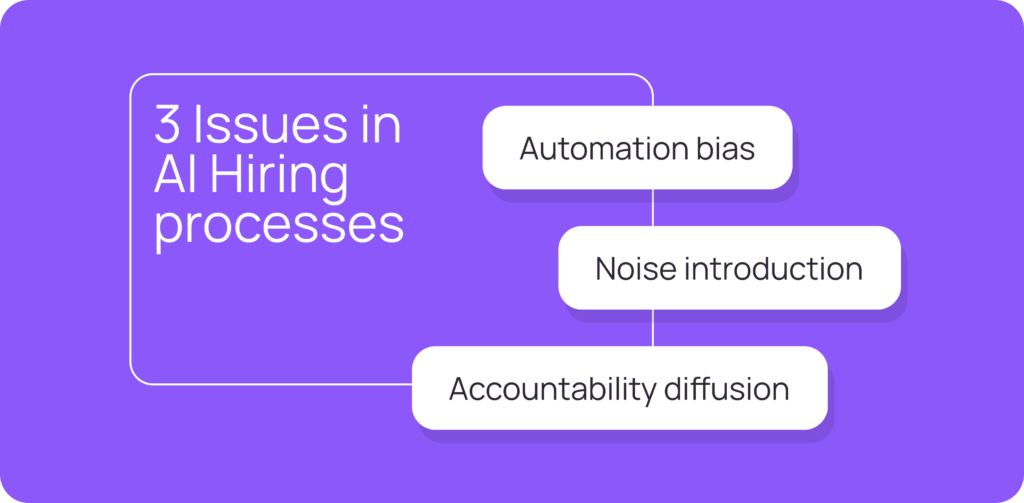

Recruiters encounter three issues when they bolt human oversight onto AI hiring processes:

- Automation bias: Reviewers defer to AI outputs rather than conduct real evaluations

- Noise introduction: Unstructured human judgment adds variance rather than correction

- Accountability diffusion: Both the AI and the human using the AI are “in the loop,” but neither is responsible for outcomes, so the process runs unchecked and is ineffective

From oversight to architecture

This is where subject matter experts, like organisational psychologists, talent acquisition professionals, AI ethicists, and hiring managers, have a pivotal opportunity.

Not as gatekeepers who review every output, but as architects who shape how expertise is encoded into hiring systems that can scale for high-volume needs. It’s about training AI to produce better outputs and solicit human interaction when necessary.

The shift requires treating human-in-the-loop (HITL) as intentional system design, not as an add-on bolted on to address ethical concerns. In other words, the question to ask is not “Should humans be involved at all?” but “Where, how, and for what purpose should human expertise intervene?”

Effective HITL requires different people at different stages. Organisational psychologists and domain experts shape how systems are built. AI ethicists define boundaries and monitor for fairness. Hiring managers and recruiters apply contextual judgment where it matters most. Each role only works when systems are designed for meaningful participation from the start.

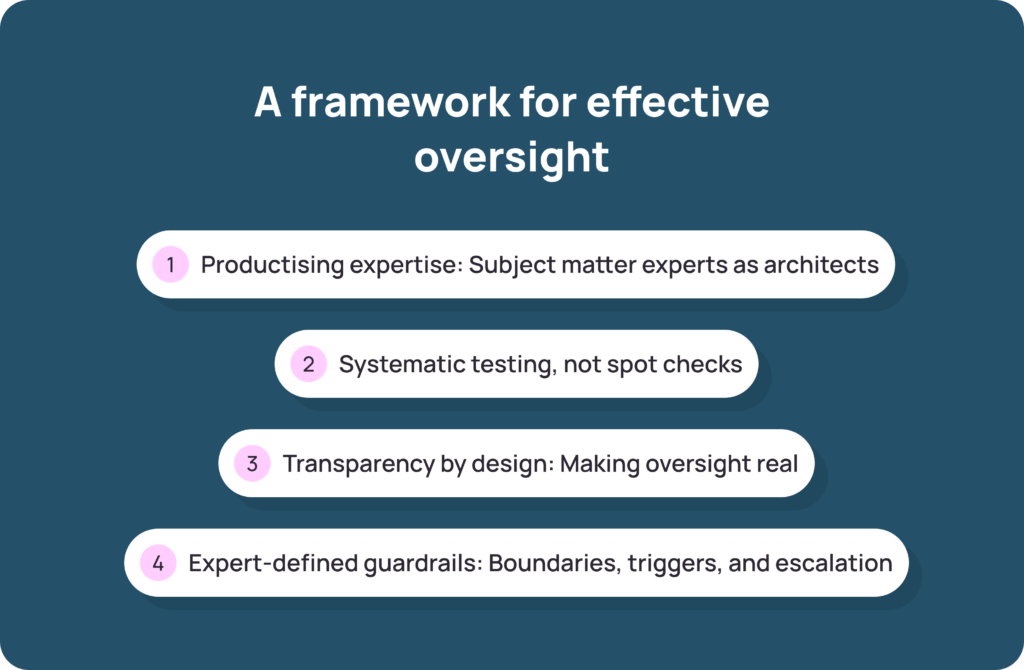

A framework for effective oversight

Here are four principles where human expertise creates genuine value in AI hiring.

1. Productising expertise: Subject matter experts as architects

The best human contributions happen before job seekers apply. Subject matter experts, from organisational psychologists to your company’s current employees, work with AI engineers to define competency frameworks, behavioural indicators, and evaluation criteria.

This knowledge transfer shapes how systems reason. When an expert defines what “accountability” looks like for a customer success role versus a finance role, their judgment propagates through every assessment the system makes in the future.

Once encoded, expertise remains visible, open to challenge, and refinable over time. This is why AI system design for the hiring process requires more than engineers and data scientists.

2. Systematic testing, not spot checks

Before deployment, experts should evaluate outputs against representative test cases that cover diverse scenarios, edge cases, and protected group representations.

When reviewers compare AI-generated competency recommendations to expert consensus, discrepancies turn into learning opportunities. Where does the AI align with human judgment? Where does it diverge? Why? This iterative refinement builds confidence that the system behaves as intended before it interacts with real candidates.
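As a rough illustration, that comparison can be made systematic with a few lines of code. This is a minimal sketch, not a description of any particular product: the `RoleCase` structure, the overlap measure (shared competencies divided by all competencies named by either side), and the sample competencies are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class RoleCase:
    """One pre-deployment test case (hypothetical structure)."""
    role: str
    expert_consensus: set[str]   # competencies the expert panel agreed on
    ai_recommended: set[str]     # competencies the AI proposed for the same role


def compare(case: RoleCase) -> dict:
    """Summarise where the AI aligns with, and diverges from, expert consensus."""
    agreed = case.expert_consensus & case.ai_recommended
    union = case.expert_consensus | case.ai_recommended
    return {
        "role": case.role,
        "overlap": len(agreed) / len(union) if union else 1.0,
        "missed_by_ai": sorted(case.expert_consensus - case.ai_recommended),
        "added_by_ai": sorted(case.ai_recommended - case.expert_consensus),
    }


# Invented example data.
case = RoleCase(
    role="customer success",
    expert_consensus={"accountability", "empathy", "resilience"},
    ai_recommended={"accountability", "empathy", "drive"},
)
report = compare(case)
print(report)
```

The `missed_by_ai` and `added_by_ai` lists are the useful part: each entry is a concrete discrepancy for experts to discuss, rather than a single pass or fail number.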

Addressing potential biases at this stage is far more effective than correcting them after go-live. Responsible AI in hiring means building fairness into your process, not only auditing for it afterwards.

3. Transparency by design: Making oversight real

You should build systems with visible reasoning chains.

Put another way, every score should come with an explanation that relates to specific behavioural evidence. This transforms oversight from rubber-stamping into a genuine review.
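One way to picture this requirement is a score that cannot exist without its supporting evidence. The sketch below is hypothetical: the class names, fields, and sample data are assumptions for illustration, not the schema of any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    indicator: str   # behavioural indicator defined by experts
    present: bool    # whether the indicator was observed
    excerpt: str     # the candidate's words the judgment rests on


@dataclass(frozen=True)
class ExplainedScore:
    competency: str
    score: float
    evidence: tuple[Evidence, ...]

    def __post_init__(self):
        # Refuse to create a score that a reviewer could not interrogate.
        if not self.evidence:
            raise ValueError(f"score for {self.competency!r} has no supporting evidence")

    def explain(self) -> str:
        lines = [f"{self.competency}: {self.score:.1f}"]
        for e in self.evidence:
            mark = "present" if e.present else "absent"
            lines.append(f'  - {e.indicator} ({mark}): "{e.excerpt}"')
        return "\n".join(lines)


# Invented example data.
scored = ExplainedScore(
    competency="accountability",
    score=4.0,
    evidence=(Evidence("owns mistakes", True, "I called the client myself to apologise"),),
)
print(scored.explain())
```

Making evidence mandatory at the data level means an unexplained score is a construction error, not something a reviewer has to notice.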

When a recruiter can see why a candidate scored as they did (because certain behavioural indicators were present or absent), they can exercise informed judgment. And when HR teams review talent insights, they can identify where their contextual knowledge should complement or override what the AI makes visible, which supports a better hiring decision.

This is what Sapia.ai operationalises through our FAIR framework, which requires all AI systems used throughout the hiring process to be unbiased, valid, explainable, and inclusive.

- Unbiased: Outcomes must not favour groups defined by protected attributes

- Valid: The AI used in the hiring process must demonstrate criterion validity

- Explainable: There must be documentation to help interpret individual outcomes

- Inclusive: Employers must treat all candidates the same, regardless of background

Where other tools offer glossy claims, the FAIR framework gives risk teams the documented transparency they need to evaluate what the system actually does.

4. Expert-defined guardrails: Boundaries, triggers, and escalation

Guardrails should not be generic safety nets. Instead, they should be expert-specified boundaries with defined interventions for the AI system and its human counterparts to follow.

AI ethicists and compliance teams define what the system should never infer or recommend. Domain experts specify triggers for human escalation, like when confidence scores fall below thresholds, candidate responses flag anomalies, or edge cases require a judgment call. Clear escalation paths ensure the right expertise gets applied to the right situations.

This transforms AI governance from reactive to proactive, allowing your hiring team to define when to require human judgment, then route decisions accordingly.
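The escalation triggers described above can be written down as explicit, reviewable rules. The following is a minimal sketch under stated assumptions: the route names, the `Assessment` fields, the 0.7 confidence floor, and the choice of who reviews what are all hypothetical and would be set by your own experts.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    STANDARD = "standard process"
    RECRUITER_REVIEW = "recruiter review"
    EXPERT_REVIEW = "expert review"


@dataclass
class Assessment:
    candidate_id: str
    confidence: float                                   # system confidence in its score, 0.0 to 1.0
    anomaly_flags: list[str] = field(default_factory=list)
    is_edge_case: bool = False


CONFIDENCE_FLOOR = 0.7  # hypothetical threshold, defined by domain experts


def route(a: Assessment) -> tuple[Route, str]:
    """Return where the assessment goes next and the reason, for the audit trail."""
    if a.anomaly_flags:
        return Route.EXPERT_REVIEW, "anomalies flagged: " + ", ".join(a.anomaly_flags)
    if a.is_edge_case:
        return Route.EXPERT_REVIEW, "edge case requires a judgment call"
    if a.confidence < CONFIDENCE_FLOOR:
        return Route.RECRUITER_REVIEW, f"confidence {a.confidence:.2f} below {CONFIDENCE_FLOOR}"
    return Route.STANDARD, "no trigger fired"


print(route(Assessment("c-001", confidence=0.92)))
print(route(Assessment("c-002", confidence=0.55)))
print(route(Assessment("c-003", confidence=0.90, anomaly_flags=["copied response"])))
```

Returning the reason alongside the route keeps every escalation explainable after the fact, which matters as much for compliance reporting as for the reviewer picking up the case.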

What this looks like in practice with Sapia.ai

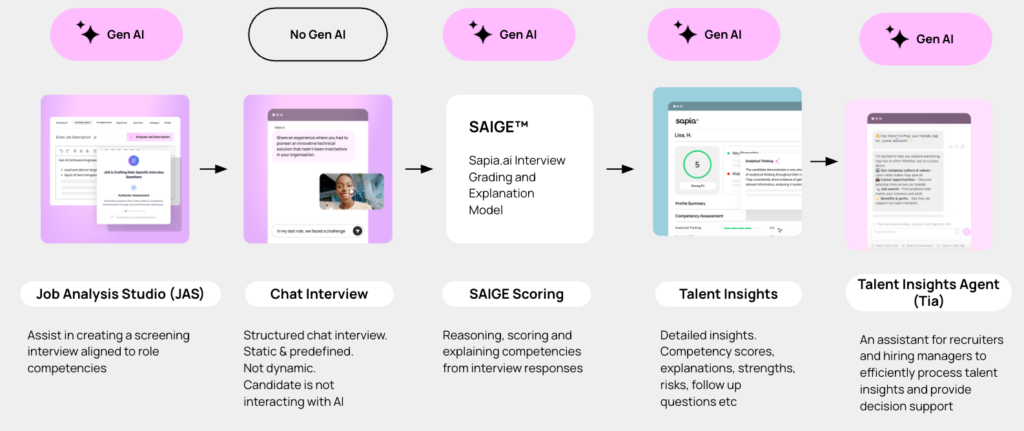

We designed Sapia.ai so that each stage of the AI hiring workflow supports meaningful human involvement from the very beginning, not as an afterthought.

For example, the Job Analysis Studio shifts the expert’s role from conducting every job analysis themselves to defining the framework the AI uses. Then, the expert simply determines if the AI’s recommendations make sense for specific contexts. Meanwhile, talent acquisition professionals retain control over the competency frameworks that drive every downstream assessment.

Then there’s our Talent Insights feature, which surfaces evidence-based candidate profiles where every score traces back to specific behavioural evidence from the AI Chat Interview process. This makes candidate scores both visible and explainable to the recruiter reviewing them.

Plus, our Talent Intelligence Agent, named Tia for short, structures insights for hiring managers so human judgment can focus on the things AI can’t assess, like team dynamics, role nuances, and a specific candidate’s potential beyond their demonstrated competencies.

Finally, our Interview scheduling tool removes administrative overhead so recruiters can spend their time on high-value activities, like building relationships with more candidates.

As you can see, every tool that Sapia.ai offers uses AI to streamline low-value tasks, while giving humans the ability to display their unique judgment and add genuine value.

The result? A streamlined process that provides better candidate experiences to top talent and enables you to make better hiring decisions, while reducing the risks described above.

The regulatory context HR teams are operating in

If you’re in talent acquisition or human resources, the framework described earlier isn’t a nice-to-have philosophy. The regulatory environment around AI in hiring is tightening, and fast.

For example, the EU AI Act classifies AI systems used in employment as high-risk, with obligations around transparency, human oversight, and documentation. Then there’s New York City’s Local Law 144, which requires automated employment decision tools to undergo independent bias audits and disclose their use to candidates. The UK GDPR, meanwhile, places obligations on automated decision-making and gives candidates a right to human review.
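Bias audits of this kind typically report an impact ratio per group: the group's selection rate divided by the selection rate of the most-selected group. Below is a minimal sketch of that calculation. The group labels and counts are invented, and the 0.8 review threshold is the familiar four-fifths rule of thumb from U.S. selection guidelines, used here as an assumption rather than as a requirement of any law named above.

```python
def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to its selection rate relative to the most-selected group.

    `outcomes` maps a group label to (selected, total_applicants).
    """
    rates = {g: sel / total for g, (sel, total) in outcomes.items() if total > 0}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any selected candidates")
    return {g: rate / best for g, rate in rates.items()}


# Invented example data.
outcomes = {
    "group_a": (120, 400),
    "group_b": (90, 400),
    "group_c": (45, 200),
}
ratios = impact_ratios(outcomes)

REVIEW_THRESHOLD = 0.8  # assumption: four-fifths rule of thumb
flagged = sorted(g for g, r in ratios.items() if r < REVIEW_THRESHOLD)

for group, ratio in ratios.items():
    print(f"{group}: impact ratio {ratio:.2f}")
print("needs review:", flagged)
```

A ratio below the threshold is a prompt for investigation by qualified people, not a verdict in itself.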

Good news: Sapia.ai is compliant with NYC Local Law 144 and aligned with the EU’s AI Act requirements. Other tools that can’t demonstrate documented compliance carry real legal and reputational risk. This is something to consider when hiring in the age of AI.

Choosing the right AI tools

Whether you are an HR leader evaluating vendors, an organisational psychologist assessing AI models, or a risk team reviewing its screening process, you need to know:

- Does the system show its reasoning?

- Was domain expertise involved in designing the competency frameworks?

- Are guardrails explicit and expert-defined?

- Can reviewers meaningfully evaluate AI outputs, or are they rubber-stamping?

- Does the platform comply with the EU AI Act, NYC Local Law 144, and UK GDPR?

- Is there independent documentation of bias testing across protected groups?

Tools built for transparency, where reasoning chains are visible, competency frameworks are explicit, and explanations trace back to behavioural evidence, enable genuine oversight. Tools that treat human-in-the-loop as a checkbox only create the illusion of accountability.

The workflow below maps the full journey from job analysis to hiring decision support within Sapia.ai. Each stage shows where Gen AI is active, where it is deliberately absent, and where we intentionally built human judgment into the process.

This is what the four principles above look like when they move from theory into a functioning recruitment system, one that uses large language models and natural language processing to eliminate repetitive tasks and free up recruiters’ time.

The stakes are clear

Your hiring decisions will shape your organisation for years.

As AI increasingly mediates the recruitment process, TA leaders and HR transformation heads face a concrete choice: build for human oversight or accept systems that merely claim it. Subject matter experts across the hiring ecosystem have the responsibility to demand the former.

Sapia.ai enables you to build an AI-powered hiring process that includes human oversight from the very beginning. As such, our platform helps you make better hiring decisions in less time.

Book a demo to see how we build human oversight into every stage of your hiring workflow.

FAQs about artificial intelligence in hiring

Human-in-the-loop (HITL) refers to human involvement at one or more stages of an AI-assisted recruitment process. Its value depends entirely on design. Effective HITL means the right human roles intervening at the right stages. For example, subject matter experts shape system design, ethicists define guardrails, and recruiters apply contextual judgment where it matters most.

Bias can enter through workforce data that reflects past hiring decisions, algorithms that favour or penalise protected groups, and reviewers who defer to AI scores without questioning them. Aim for representative historical data, validated competency frameworks, systematic bias testing before deployment, and transparent tools that enable real human review.

Developed by Sapia.ai, the FAIR™ framework (Fair AI for Recruitment) requires AI hiring systems to be unbiased, valid, explainable, and inclusive. For risk and compliance teams, it provides a structured and documented basis to evaluate if an AI hiring tool is trustworthy.

Look for independent bias audits, documented compliance with the EU AI Act and NYC Local Law 144, visible reasoning chains, and audit trails for compliance reporting. Sapia.ai has published an independent bias audit, is compliant with NYC Local Law 144, and is aligned with the EU AI Act’s requirements.

Every score should trace back to specific behavioural evidence, and competency frameworks should be visible and customisable. In addition, recruiters need reasoning chains they can evaluate. Finally, demand population-level analytics to monitor for drift. These things will ensure compliance and guard against both AI and human biases. Sapia.ai’s Talent Insights and Discover Insights meet these requirements.

AI screening, assessment, interview scheduling, and decision support are now a regular part of the recruitment process. The risks fall into three categories: fairness risks (biased outcomes for protected groups), compliance risks (non-compliance with the EU AI Act, NYC Local Law 144, or UK GDPR), and operational risks (poor system design that erodes hiring quality). Your exposure to each risk depends on how well you design and validate the system.

The EU AI Act classifies employment AI as high-risk, requiring transparency, documentation, and human oversight. NYC Local Law 144 mandates annual independent bias audits and candidate disclosure. UK GDPR gives candidates a right to human review of automated decisions. In the U.S., the Equal Employment Opportunity Commission (EEOC) enforces federal laws against employment discrimination. Every organisation should assess tools against all applicable frameworks and select vendors with documented compliance to reduce the risk of biased outcomes.