AI in talent assessment: 6 validated approaches for better selection

TL;DR:

- AI in talent assessment applies automation and machine learning to deliver and score structured assessments while preserving human judgment for final decisions.

- Interview-first matters because front-loading comparable, job-related evidence at the apply stage eliminates CV-based gatekeeping, so you can shortlist on merit.

- The outcomes: Organisations report a fairer process than traditional resume screening, higher predictive validity for job success, and reductions in time-to-hire.

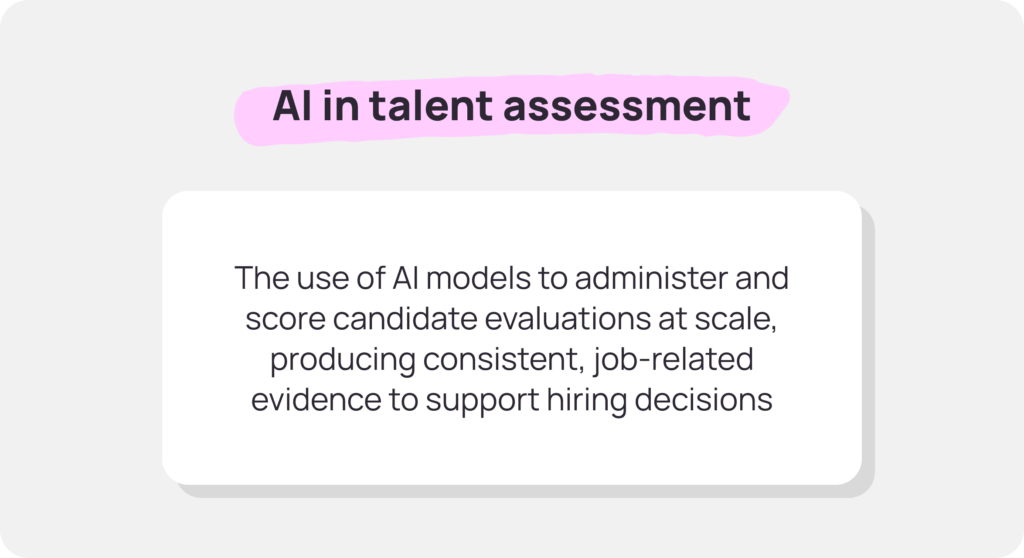

What do we mean by AI in talent assessment?

Artificial intelligence (AI) in talent assessment uses automation and models to deliver and score structured interviews, skills tests, work samples, and simulations at scale.

Thanks to natural language processing (NLP), AI candidate assessment tools handle the repeatable, evidence-gathering work that typically consumes recruiter hours: administering prompts, applying scoring rubrics, flagging high performers, and surfacing comparable data.

Human oversight is still needed to review shortlists, conduct live conversations, and make final offers. But the right AI tools speed up the process and lead to more accurate hiring decisions.

One more thing: Talent assessment AI sits squarely in the apply-to-shortlist funnel. AI-powered candidate assessment excels when volume is high and consistency matters: initial screening, first-pass interviews, competency checks, knockout assessments. As such, this AI technology complements live interviews and hiring manager evaluation. It doesn’t replace these things.

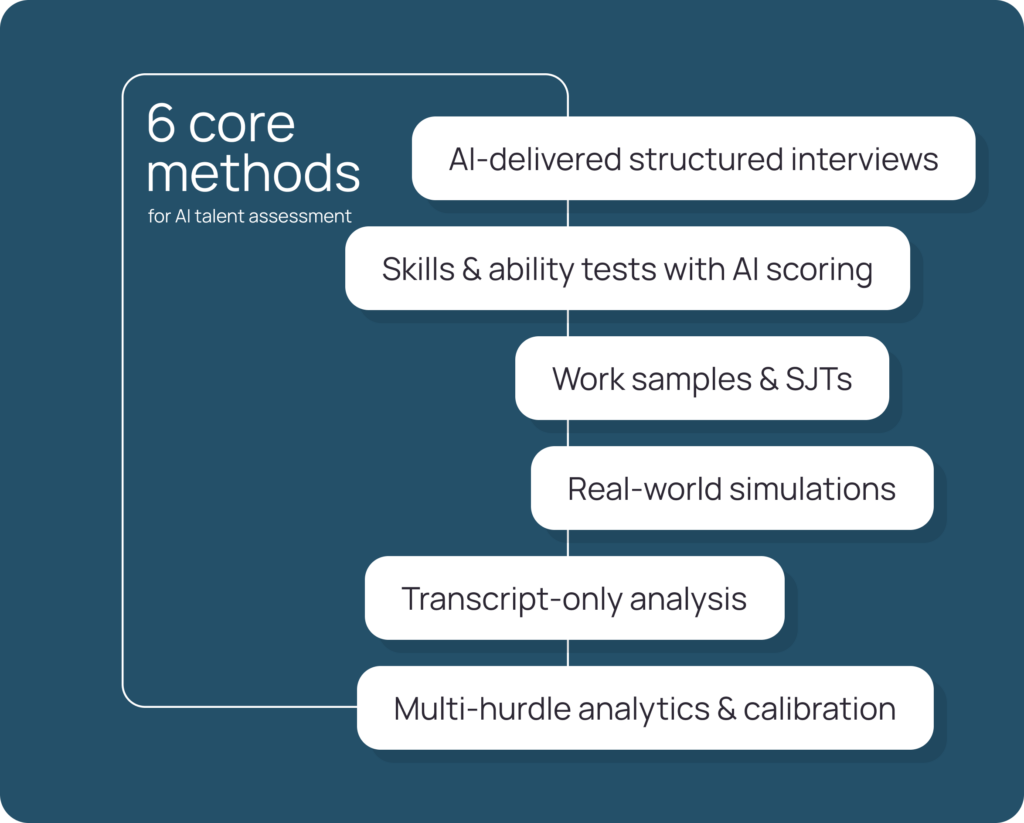

The 6 validated approaches (and where they shine)

These six approaches work. Choose based on your volume, the role’s technical versus behavioural demands, and whether you’re assessing entry-level hires or experienced professionals.

1) AI-delivered structured interviews (interview-first at apply)

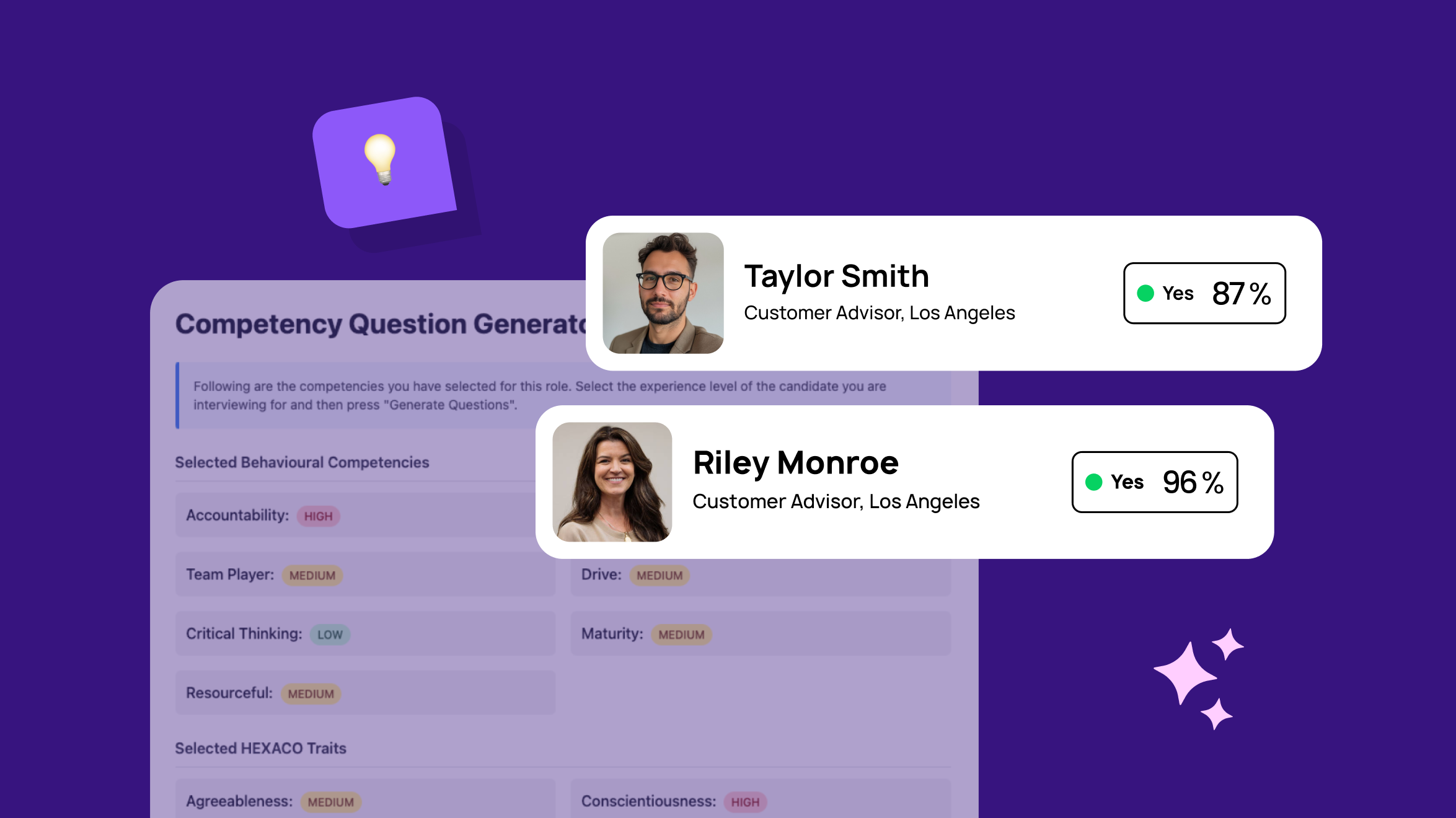

Short, mobile-friendly chat interviews that use standardised prompts and anchored rubrics to collect comparable evidence from applicants and assess candidate quality.

First, applicants respond to job-related behavioural or situational questions. Then, AI scores answers against pre-defined criteria and recruiters review scores blindly to reduce bias.
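To make the blind review step concrete, here is a minimal sketch of how a reviewer-facing copy of an application might be produced. The field names (`name`, `email`, `photo_url`, `cv_text`) and the `blind_view` helper are hypothetical and for illustration only; a real ATS export will use its own schema.

```python
# Hypothetical identifying fields; adjust to whatever your ATS actually exports.
IDENTIFYING_FIELDS = {"name", "email", "photo_url", "cv_text"}


def blind_view(application: dict) -> dict:
    """Return a copy of the application with identifying fields removed."""
    return {
        key: value
        for key, value in application.items()
        if key not in IDENTIFYING_FIELDS
    }


# Illustrative record, not real data.
application = {
    "candidate_id": "c-1042",
    "name": "Alex Example",
    "email": "alex@example.com",
    "photo_url": "https://example.com/photo.png",
    "cv_text": "(CV contents)",
    "answers": {"q1": "I would start by asking the customer what happened."},
    "rubric_scores": {"customer_focus": 4, "ownership": 3},
}

reviewer_copy = blind_view(application)
print(sorted(reviewer_copy))
```

The reviewer sees only the candidate ID, the answers, and the rubric scores. The original record is left untouched, so identifying details can be restored after the first-pass decision is logged.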

This approach is best for high-volume roles where you need comparable evidence early—customer service, sales, graduate programmes, retail, contact centres, etc.

Solutions like Sapia.ai overlay your ATS to run interview-first workflows, apply blind scoring, and produce explainable shortlists at scale without replacing your existing tech stack.

2) Skills and ability tests with AI scoring

Job-related micro-assessments covering numeracy, writing, problem-solving, job knowledge, and similar skills, scored consistently by AI models to find qualified candidates.

Said assessments are often time-boxed to avoid candidate fatigue and designed to measure specific technical skills or cognitive abilities that correlate with role performance.

This approach is best deployed in situations where validity is proven for the role and you can demonstrate clear job-relatedness. We suggest making alternative tests available for accessibility and preparing to show adverse-impact monitoring if/when necessary.

3) Work samples and situational judgement tests (SJTs)

Candidates tackle role-realistic tasks or navigate scenario-based trade-offs.

Afterwards, AI-powered assessment tools score responses against rubrics that capture judgment, prioritisation, and values alignment, not keyword matching. Because these assessments mirror actual job demands, they’re highly predictive and can help reduce time-to-hire.

This approach is strong for customer service, operations, sales, and graduate hiring, especially when you need evidence of applied judgment under realistic constraints.

4) Real-world simulations

Candidates interact with a realistic environment. Then, AI summarises observed behaviours against competencies like prioritisation, empathy, compliance, and decision-making.

This approach is ideal for contact centres, logistics dispatch, retail leadership, and any other role in which the job description calls for real-time judgment and multitasking skills.

5) Transcript-only analysis for video/audio steps

This approach captures candidate responses via video or audio but uses machine learning algorithms to score only the transcript content, minimising appearance and accent bias.

(Note: We recommend human reviews for edge cases and ambiguous responses. Also, let candidates choose audio, video, or text-based responses to suit their comfort and access.)

This approach preserves the richness of open-ended responses while reducing the impact of irrelevant factors. That way, you can make informed hiring decisions.

6) Multi-hurdle analytics and calibration

Combine signals, such as a structured interview, a work sample, and knockout compliance checks, with transparent, pre-set weights. Then, monitor adverse impact, track inter-rater reliability, and recalibrate quarterly based on hiring outcomes and future talent needs.
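As a rough illustration of combining signals with pre-set weights, the sketch below treats knockout checks as a gate and blends the remaining scores into one composite. The weights, signal names, and the `composite_score` function are assumptions made for this example, not a recommended configuration.

```python
from typing import Optional

# Hypothetical pre-set weights on a 0-1 score scale; they should sum to 1.0.
WEIGHTS = {"structured_interview": 0.6, "work_sample": 0.4}


def composite_score(scores: dict[str, float], knockouts_passed: bool) -> Optional[float]:
    """Return the weighted composite, or None if a knockout check failed."""
    if not knockouts_passed:
        return None  # knockouts gate the candidate; they are not averaged in
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Illustrative values only.
candidate = {"structured_interview": 0.8, "work_sample": 0.5}
result = composite_score(candidate, knockouts_passed=True)
print(round(result, 2))
```

Keeping the weights in one visible, versioned place is what makes quarterly recalibration auditable: every shortlist can be traced back to the weights in force at the time.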

This is a top approach if you want to build governance into your system. We’re talking about audit trails, rationale capture for borderline decisions, and clear data retention policies. These things make candidate assessment AI tools a managed, accountable process, not a black box.

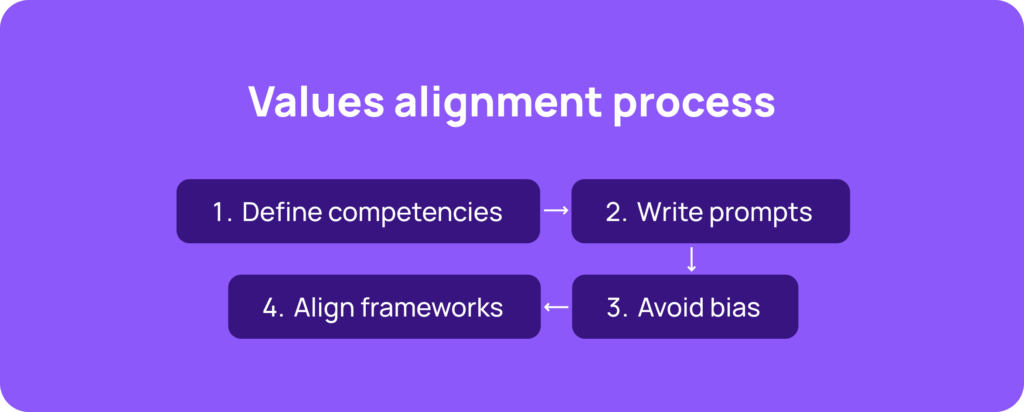

Design your process for values alignment

Talent Management and HR teams should hire for values alignment, not “cultural fit”.

To do so, map each role to five to seven competencies that predict success. Examples include customer focus, teamwork, learning agility, ownership, and inclusion-in-action.

Then, write behavioural and situational prompts that probe these competencies directly. Avoid insider jargon and vibe-based queries that prevent data-driven insights and invite bias.

Also, align your framework to validated models. Sapia.ai’s competency framework is a proven way to translate job requirements into measurable behaviours. The aim is to assess someone’s ability to do the job and thrive in your environment, not to see if they mirror internal talent.

Candidate experience by design

Does your hiring process prioritise potential candidates and their needs?

You can design a top-level candidate experience by setting clear time expectations upfront, supporting mobile access and low-bandwidth environments, and providing multilingual flows.

You can also provide post-assessment feedback to everyone, not just to shortlisted candidates. Even a short, constructive message improves sentiment and protects your employer brand.

Finally, enable self-serve rescheduling so candidates can complete assessments when it suits them. And publish timelines and next steps immediately after submission to prevent ghosting and keep candidates engaged. These things are key to proper workforce management.

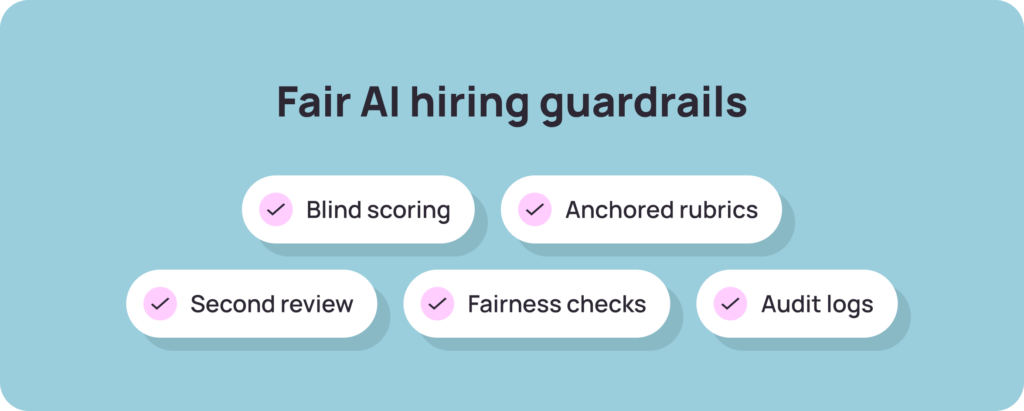

Guardrails, fairness, and explainability for hiring teams

You need to outline ethical AI practices before you use AI tools. Here’s what we suggest:

- Blind first-pass scoring to remove names, photos, and CV details from initial reviews

- Anchored rubrics to ensure every scorer applies the same criteria to every candidate

- Second readers to help assess borderline cases and make better hiring decisions

- Fairness checks to assess representation by stage and adverse-impact ratios

- Exportable audit logs to ensure compliance and avoid potential legal issues
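The adverse-impact ratio mentioned in the list above can be sketched in a few lines. This example compares each group's selection rate at a stage with the highest group's rate and flags ratios below 0.8, the widely used "four-fifths" rule of thumb. The group labels, counts, and threshold here are illustrative assumptions; check which standard applies in your jurisdiction.

```python
FOUR_FIFTHS = 0.8  # assumed flagging threshold for this sketch


def selection_rates(applied: dict[str, int], advanced: dict[str, int]) -> dict[str, float]:
    """Share of each group that advanced past a stage."""
    return {
        group: advanced.get(group, 0) / count
        for group, count in applied.items()
        if count > 0
    }


def adverse_impact_ratios(rates: dict[str, float]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group advanced, so ratios are undefined")
    return {group: rate / top for group, rate in rates.items()}


# Illustrative counts, not real data.
applied = {"group_a": 200, "group_b": 150}
advanced = {"group_a": 60, "group_b": 30}

ratios = adverse_impact_ratios(selection_rates(applied, advanced))
flagged = [group for group, ratio in ratios.items() if ratio < FOUR_FIFTHS]
print(flagged)
```

A flagged group is a prompt for human investigation of that stage, not proof of bias on its own. Small samples in particular can swing the ratio sharply.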

Also, clarify acceptable use of AI for candidates. Many will use AI tools to draft responses. That’s fine for text-based assessments as long as the content reflects their own judgment.

Lastly, retain “human-in-the-loop” overrides with documented rationale for every hire and rejection. AI-powered tools should support decisions; they shouldn’t make them.

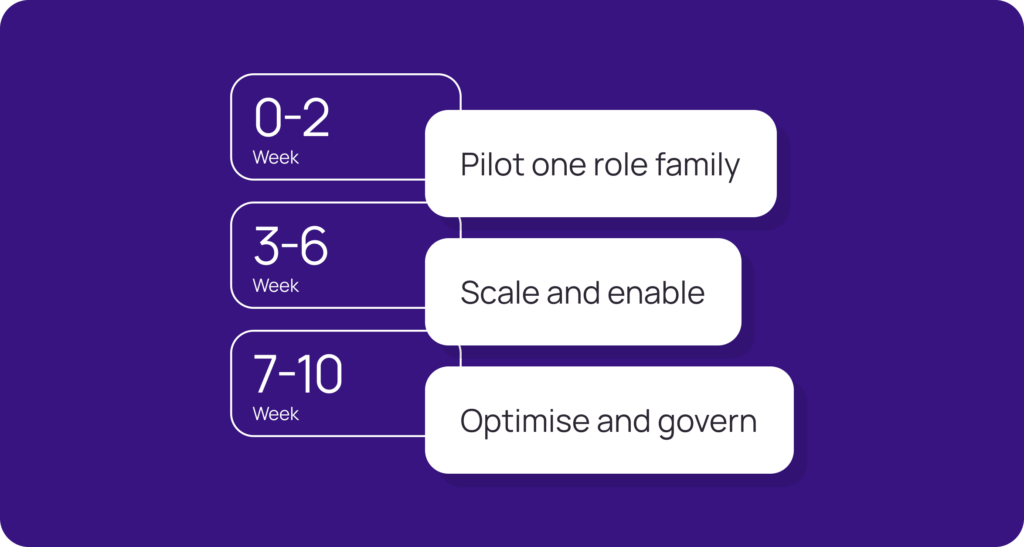

A fast and light implementation roadmap

You don’t need a six-month transformation programme to update traditional methods with AI-powered hiring tools. A phased, pilot-first approach will do the trick:

Weeks 0–2 — Pilot one role family

Draft prompts and rubrics for a single high-volume role. Then, switch on interview-first at apply, and tell recruiters to review assessments within 24 hours. Next, automate reminders for incomplete submissions to keep candidates engaged in your hiring process. Good news: Sapia.ai supports overlay pilots without replacing your ATS, so you can test without disruption.

Weeks 3–6 — Scale and enable

Add a short work sample where it’s predictive. Then, develop manager packs that explain what a “good” candidate looks like. Doing so will help recruiters score consistently and make better hiring recommendations. Next, turn on feedback-for-all to close the loop with every candidate.

Weeks 7–10 — Optimise and govern

Review fairness and quality metrics like representation at each stage, score distributions, time-to-hire metrics, and candidate sentiment. Then, tune thresholds based on early retention and performance data. Next, add sites, languages, and role families. Finally, document audit procedures and data retention schedules for compliance and transparency purposes.

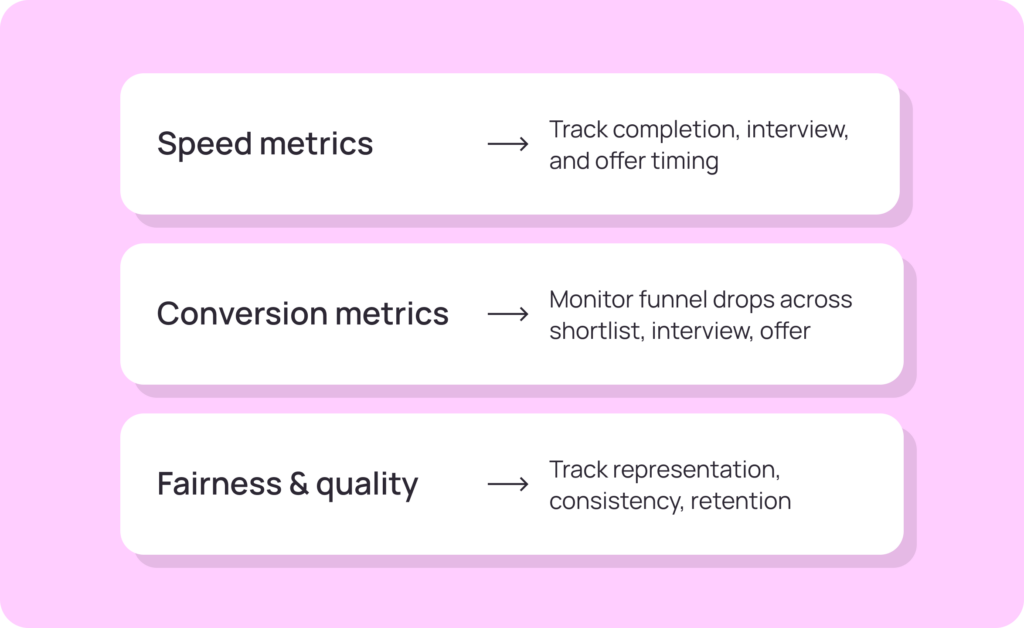

Metrics that prove your AI-powered hiring process is working

Track these metrics to identify friction points before they compound and to demonstrate that the process is working:

- Speed metrics measure process efficiency. Track apply-to-assessment completion (target: ≤48 hours), time-to-first-interview (measured in hours, not days), and time-to-offer (measured in days, not weeks). Speed improves candidate experience.

- Conversion metrics reveal where your funnel leaks. Monitor assessment-to-shortlist rates, interview-to-offer ratios, offer-to-start conversions, and no-show rates. Sudden drops signal poor targeting, candidate experience issues, or both.

- Fairness and quality metrics prove your system promotes equality. Track representation by stage (are underrepresented groups advancing at comparable rates?), inter-rater reliability (are human reviewers applying rubrics consistently?), candidate sentiment or NPS, and 90-day retention. High retention among AI-assessed hires validates predictive accuracy, while low retention signals calibration issues.
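Inter-rater reliability, listed among the fairness and quality metrics above, can be quantified in several ways. One common option is Cohen's kappa, which measures how often two reviewers agree beyond what chance alone would produce. The sketch below assumes two reviewers scoring the same candidates on the same rubric scale; the ratings are made up for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a: list[int], rater_b: list[int]) -> float:
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty rating lists")
    n = len(rater_a)
    # Proportion of items where both raters gave the same score.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's score frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical score throughout
    return (observed - expected) / (1 - expected)


# Illustrative rubric scores from two reviewers for eight candidates.
reviewer_1 = [1, 2, 3, 3, 2, 1, 3, 2]
reviewer_2 = [1, 2, 3, 2, 2, 1, 3, 3]

kappa = cohens_kappa(reviewer_1, reviewer_2)
print(round(kappa, 2))
```

A kappa of 1 means perfect agreement and 0 means no better than chance. Because rubric scores are ordered, a weighted variant that penalises near-misses less than large gaps may be a better fit in practice.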

What to look for in AI talent assessment tools

Prioritise ATS overlay capability. That way, you don’t have to replace your existing system.

We also recommend structured chat delivery, blind scoring options, explainable shortlists with transparent rationale, and SMS and email nudges to drive completion rates.

Accessibility matters as well. Multilingual support, screen-reader compatibility, and alternatives for candidates who can’t complete standard assessments are important features.

In addition, ensure your dashboards surface the above metrics in real time. And confirm data residency meets your compliance requirements, particularly for GDPR or sector-specific rules.

Last but not least, demo AI-based candidate assessment tools before you invest. During said demos, ask specific questions like: Can you trigger interview-first at apply? Does the first pass run blind? Can you send safe, bulk feedback without legal risk? How fast can you pilot? Can you export full audit trails on demand? The answers you get back will help you make a purchase decision.

The final word

The winning pattern for AI in talent assessment is straightforward: design a process that’s structured, job-related, blind where possible, explainable, and respectful of every candidate.

This combination delivers faster decisions and fairer outcomes without sacrificing rigour or human judgment. After all, AI recruitment tools don’t replace your talent acquisition team. They amplify your ability to identify top talent, reduce bias, and focus human effort where it matters.

See interview-first and rubric-based scoring running on your stack. Book a demo.

FAQs about AI in talent assessment

AI in talent assessment automates delivery and scoring of structured interviews, tests, and simulations. It fits best from apply through shortlist, providing comparable, job-related evidence before live interviews. Human judgment remains essential for final decisions.

Structured interviews use standardised prompts and anchored rubrics, scored blind. Unstructured calls vary by interviewer, opening the door to pedigree bias, personality matching, and inconsistent evaluation. Structure removes noise; AI enforces structure at scale.

Use work samples and simulations when the role requires applied judgment, multitasking, or navigating realistic trade-offs. Tests suit roles where discrete skills (numeracy, writing) predict success. Simulations show how candidates actually perform in real-world job conditions.

Track representation by stage, adverse-impact ratios, and inter-rater reliability for fairness. For predictive validity, monitor 90-day retention, performance ratings, and offer-to-start conversions. Then compare results between AI-assessed hires and traditional hires.

Yes, AI assessment can run alongside your existing ATS. Overlay solutions like Sapia.ai integrate with it via API to trigger assessments at apply and push scored shortlists into your workflow. No rip-and-replace!

Use templated, rubric-based feedback tied to observable behaviours and competency scores. Avoid vague or subjective language. Many AI-driven candidate assessment platforms generate safe, consistent feedback automatically, reducing legal exposure and improving experience.